Big Data Services

With practical experience in 30+ domains, ScienceSoft provides big data development, consulting, support and maintenance services. We guarantee a safe project start with a feasibility study and a PoC as well as optimal development costs thanks to our mature processes.

Big data services are aimed at helping companies handle massive-scale data for smooth software operation and reliable analytics insights. With experience in big data since 2013, ScienceSoft provides full-scope big data services. We also apply our experience in AI/ML, data science, business intelligence, and data visualization to maximize the value of our clients' big data initiatives.

Select Your Case

I need a solution to store and analyze large amounts of data from multiple sources

We build systems that consolidate enterprise-wide data in a centralized repository optimized for analytical querying and reporting, which serves as a single source of truth.

I need to leverage big data to automate business or production operations or get real-time insights

We build software that handles thousands of requests in real time and enables continuous operations monitoring, automated action triggers, and alerts (e.g., financial fraud detection and transaction blocking, remote patient monitoring, real-time inventory optimization).

I need to plan/develop/upgrade an XaaS app handling data from thousands of users

We build ecommerce, ride-sharing, streaming, dating, gaming, social media, and similar apps that deliver real-time user-specific recommendations, dynamic pricing, and other personalization features while maintaining stable performance under any workload.

- An expert team of architects, developers, DataOps engineers, ISTQB-certified QA engineers, data scientists, project managers, and business analysts with 5–30 years of experience.

- A quality-first approach based on a mature ISO 9001-certified quality management system.

- ISO 27001-certified security management based on comprehensive policies and processes, advanced security technology, and skilled professionals.

- Transparent and flexible pricing.

- We collaborate with companies from 80 countries.

Our Big Data Services

You will get assistance with end-to-end big data solution implementation or with separate stages of your IT initiative. You can count on us to deliver a business case (e.g., to verify solution feasibility or create a competitive strategy), estimate costs and ROI, design an architecture, and recommend an optimal tech stack. We also provide consulting on achieving full security and regulatory compliance and on implementing ML/AI-powered capabilities.

You will get a system that automatically scales up and down depending on the load, fits smoothly into your existing infrastructure, and is easy to upgrade in the future. We choose technologies that deliver the required performance at an optimal cost. For highly complex cases, we can start with a Proof of Concept (PoC) or an MVP. This way, you can verify the solution’s feasibility, try out an intermediate version of the software, and provide feedback that lets us adjust the system early on.

Improvement of a big data solution

You can turn to us to fix inefficiencies in your existing big data software or to expand it with new capabilities. Our team will audit your system and either introduce the required changes or provide actionable recommendations for implementing them. For example, we can customize and configure big data infrastructure technologies (such as Hadoop, Kafka, Spark, NiFi, Cassandra, and MongoDB) and modernize data processing pipelines to improve solution performance, add or upgrade data encryption mechanisms to eliminate security vulnerabilities, enhance containerization to improve scalability, and more.

Support and maintenance of a big data solution

We can provide infrastructure support, solution administration, data cleansing, and any other support and maintenance services you need. You can request either one-time assistance or have our team continuously monitor your software to fix and prevent issues.

Does Your Data Qualify as Big Data?

The term "big data" is tricky: it seems to refer only to data volume, but your data can qualify as big data due to many other factors. Take our simple quiz to find out!

Does your data arrive constantly, at short intervals (every 10 minutes or more often)?

Do you need to process unstructured data (e.g., texts, images, videos, audio)?

Should your data be processed as soon as it arrives?

Does your solution feature real-time functionality (e.g., immediate notifications to users, fraud detection alerts, automated IoT action triggers)?

Does your business experience constant data and user volume growth?


Looks like big data technologies will be a true value driver for you

Your solution is likely to benefit significantly from big data technologies. Tell ScienceSoft's experts about your needs and goals, and we'll be glad to help you with your IT initiative.

Emerging Big Data

Your current data operations may still work, but they’re likely to hit scalability or speed limits soon. It’s the right time to review your analytics architecture and plan for gradual modernization before performance or costs become an issue.

Looks like your data is not "big" yet

Your data workloads can be efficiently handled with traditional analytics and BI tools. The focus now should be on improving data quality, integration, and visualization to extract more business value.


That’s What Your Solution Will Be Like

We don’t know yet what solution you would like to develop, but we can definitely say it will be:

Future-proof

You will get a flexible and efficient big data system that is easy to scale and evolve in the long run. We will provide comprehensive software documentation to streamline maintenance and can stay with you for long-term solution support or train your internal team.

Secure

Relying on our ISO 27001-certified security management system and experience in cybersecurity since 2003, we can establish reliable protection for your big data solution and ensure its full compliance with all required regulations.

Rigorously tested

We develop a tailored QA strategy to ensure smooth software operation and consistent performance even under high data loads. We also automate a feasible share of tests, which helps us reduce testing costs by up to 20%.

Estimate the Cost of Big Data Services

Please answer a few simple questions to let our experts understand your project specifics and give you a tailored pricing estimation.


Would you like to learn more about ScienceSoft?

- Project success no matter what: learn how we make good on our mission.

- 35 years in data management and analytics: check what we do.

- 4,000 successful projects: explore our portfolio.

- 1,300+ incredible clients: read what they say.

Big Data Deployment: Cloud or On-Premises?

Nowadays, cloud deployment is the default option for big data: it’s cheaper and easier to set up, scale, and maintain. But say you operate in a strictly regulated industry and have an extensive list of privacy requirements: if you need complete control over your data, you’d want to own the physical servers. Conversely, some app infrastructures are simply too large or dynamic to maintain on your own. If you face unpredictable load spikes or a rapidly growing user base, it’s much safer, both financially and operationally, to let Microsoft or Amazon handle them. Dozens of other essential factors (such as data availability, processing speed, and redundancy) differ even between the largest cloud vendors, so the final choice always depends on your particular needs.

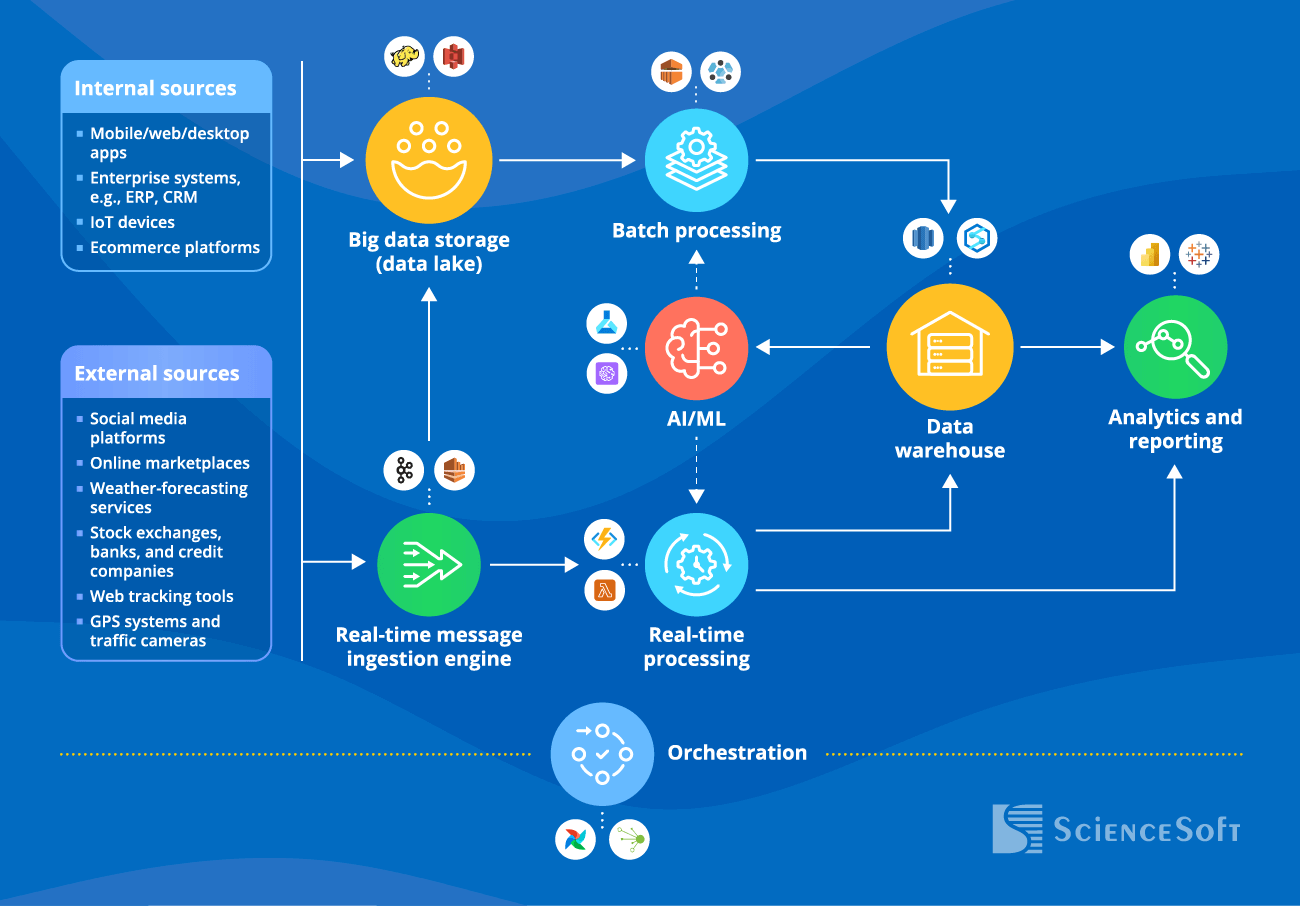

Technical Components of a Big Data Solution We Cover

- A bus layer, or aggregation layer, collects data from various sources and handles event sequencing, timestamping, and routing.

What are the sources of big data?

- Internal big data sources: customer-facing apps, ecommerce platforms, and enterprise systems such as CRM, ERP, and EHR.

- External big data sources: data from stock exchanges, banks, and credit companies, weather-forecasting services, online marketplaces, web tracking tools, GPS systems and traffic cameras, social media platforms, etc.


- A data lake stores collected raw data of all types.

What are the types of big data?

There are three main types of big data:

- Structured data can be easily organized in tables, e.g., customer demographics, financial transactions, and sales data. Such data is easy to sort and query with BI tools.

- Unstructured data can't be organized into any logical structure until it is processed with complex technologies such as AI, ML, natural language processing (NLP), and optical character recognition (OCR). Examples of unstructured data include texts, images, videos, and audio recordings. For instance, a company can apply NLP to customers' social media posts to understand their sentiment towards its service.

- Semi-structured data falls between the two previous types. Its elements can be assigned to certain fields or tags, but these elements are not always ready for querying or analytics. An example of semi-structured data is an email: its subject line and message body go to correspondingly tagged fields and can later be processed with the techniques used for unstructured data.
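To make the email example concrete, here is a minimal Python sketch using the standard-library `email` module. The function name `to_tagged_fields` and the choice of fields are illustrative assumptions. The sketch also assumes a plain-text message with no transfer encoding; base64 or quoted-printable bodies would need `get_payload(decode=True)`, which returns bytes.

```python
from email import message_from_string


def to_tagged_fields(raw_email: str) -> dict:
    """Hypothetical helper: map a raw email onto tagged fields."""
    msg = message_from_string(raw_email)
    # Headers have fixed names, so they map cleanly onto named fields/columns.
    fields = {"from": msg.get("From", ""), "subject": msg.get("Subject", "")}
    if msg.is_multipart():
        # Assumption: the first text/plain part is the message body.
        parts = [p for p in msg.walk() if p.get_content_type() == "text/plain"]
        body = parts[0].get_payload() if parts else ""
    else:
        body = msg.get_payload()
    # The body lands in a tagged field but is still free text that would
    # need NLP or similar techniques before it can be analyzed.
    fields["body"] = body.strip()
    return fields


if __name__ == "__main__":
    raw = (
        "From: anna@example.com\n"
        "Subject: Late delivery\n"
        "\n"
        "My order #123 arrived two days late.\n"
    )
    record = to_tagged_fields(raw)
    print(record["subject"])  # Late delivery
    print(record["body"])     # My order #123 arrived two days late.
```

The header fields become immediately queryable, while the `body` field illustrates why semi-structured data still needs unstructured-data techniques downstream.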


- A batch processing layer extracts data from the data storage on a schedule (with latency ranging from minutes to hours) and transforms it into analyzable formats for further processing by the analytics layer.

- A stream processing layer captures real-time data and processes it in memory as it arrives (with latency ranging from milliseconds to seconds).

- A serving component (a data warehouse) stores processed data.

- A big data governance layer handles data auditing, security, quality, cataloging, metadata management, etc.
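How the ingestion, batch, stream, and serving layers divide the work can be illustrated with a toy, in-memory Python sketch. It loosely follows the Lambda architecture pattern (a batch layer and a speed layer fed by the same ingested data, with batch results published to a serving layer). The class, method, and field names are hypothetical, governance is not modeled, and a real solution would rely on technologies such as Kafka, Spark, and a data warehouse rather than Python lists and dicts.

```python
import time
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Event:
    source: str
    amount: float
    ts: float = field(default_factory=time.time)  # timestamp assigned at ingestion


class ToyPipeline:
    """Hypothetical in-memory stand-ins for the layers; not a production design."""

    def __init__(self) -> None:
        self.data_lake: list = []               # raw events, stored as-is
        self.live_totals = defaultdict(float)   # stream layer state, in memory
        self.warehouse: dict = {}               # serving layer: batch results

    def ingest(self, event: Event) -> None:
        # Bus layer: route each timestamped event to both the lake and the stream layer.
        self.data_lake.append(event)
        self.on_stream_event(event)

    def on_stream_event(self, event: Event) -> None:
        # Stream layer: update state per event, so results are available immediately.
        self.live_totals[event.source] += event.amount

    def run_batch_job(self) -> None:
        # Batch layer: recompute from all raw data (in practice, on a schedule)
        # and publish the result to the serving layer.
        totals = defaultdict(float)
        for e in self.data_lake:
            totals[e.source] += e.amount
        self.warehouse = dict(totals)


if __name__ == "__main__":
    p = ToyPipeline()
    p.ingest(Event("web", 10.0))
    p.ingest(Event("pos", 5.0))
    p.ingest(Event("web", 2.5))
    print(p.live_totals["web"])  # 12.5, available right away
    print(p.warehouse)           # {} until the batch job runs
    p.run_batch_job()
    print(p.warehouse)           # {'web': 12.5, 'pos': 5.0}
```

The trade-off mirrors the latencies described above: the stream layer answers instantly from incremental state, while the batch layer recomputes from the complete raw data and is only as fresh as its last scheduled run.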

Big Data Technologies We Use

Here’s the list of technologies most frequently used in our big data projects.

Our Big Data Clients Are Also Interested In

ScienceSoft combines big data expertise with decades-long experience in software engineering and other advanced technologies to deliver end-to-end big data applications that bring maximum value to their users.

Building highly accurate ML models that identify hidden patterns in big data, provide reliable forecasts, power neural networks, and automate complex business algorithms.

Developing personalization engines, natural language processing systems, computer vision, and other AI-powered solutions that maintain stable performance under any data load.

Providing strategic and technological guidance in wrangling, exploring, and applying data, we employ reliable statistical methods, establish robust data quality management processes, and help avoid issues related to inaccurate data and false predictions.

Integrating large volumes of high-velocity data into scalable, fault-tolerant analytics solutions that provide trustworthy insights to any number of users.

Creating easy-to-navigate, customizable reports and dashboards that are tailored to the needs of specific business users and provide a clear, focused view of the data insights that matter most.

Proficient in Azure, AWS, and GCP, we build cloud big data solutions from scratch and migrate legacy workloads to the cloud to achieve better scalability, cost-efficiency, and availability of our clients’ data.

Frequent Questions About Big Data Services, Answered

How much does big data implementation cost?

Big data implementation costs may vary from $200,000 to $3,000,000 for a mid-sized organization. The price depends on such factors as the number of data sources, data volume and complexity, data processing specifics (batch, real-time, or both), security and compliance requirements, and the deployment model.
