Extensive Guide to Big Data Application Testing

ScienceSoft has been providing all-around software testing and QA services since 1989 and big data services since 2013.

The Essence of Big Data App Testing

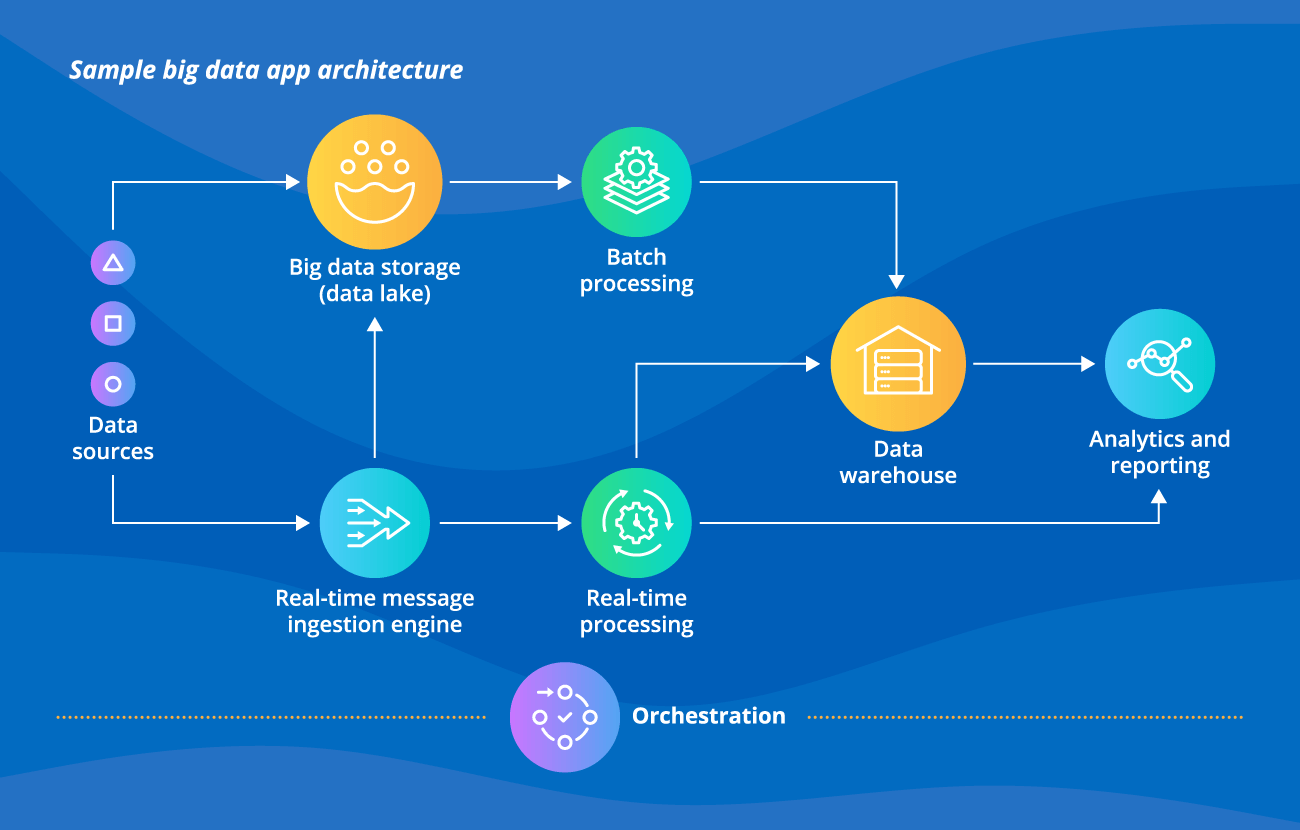

Testing a big data application that combines operational and analytical parts means validating that the app functions correctly and smoothly (including event streaming and analytics workflows, a DWH and a non-relational database, and the app’s complex integrations), as well as checking its availability, response time, resource consumption, data integrity, and security.

ScienceSoft’s testing teams rely on solid experience in the big data domain to provide smooth and cost-effective big data testing services.

Testing Types Relevant for Big Data Applications

ScienceSoft's QA specialists conduct the following types of testing in big data projects:

Functional testing

A big data application comprising operational and analytical parts requires thorough functional testing on the API level. Initially, the functionality of each big data app component should be validated in isolation.

For example, if your big data operational solution has an event-driven architecture, a test engineer sends test input events to each component of the solution (e.g., a data streaming tool, an event library, a stream processing framework, etc.) validating its output and behavior against requirements. Then, end-to-end functional testing should ensure the flawless functioning of the entire application.
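Validating a component in isolation can be sketched as follows. This is a minimal illustration, not a reference to any specific streaming tool: `count_events_per_user` is a hypothetical stand-in for a stream processing step, and the event fields (`user_id`, `type`) are assumed for the example.

```python
from collections import defaultdict


def count_events_per_user(events):
    """Hypothetical stream processing step: counts valid events per user ID."""
    counts = defaultdict(int)
    for event in events:
        # Skip malformed events that lack the required field.
        if "user_id" not in event:
            continue
        counts[event["user_id"]] += 1
    return dict(counts)


# Test input events, including one malformed event.
test_events = [
    {"user_id": "u1", "type": "click"},
    {"user_id": "u2", "type": "view"},
    {"type": "click"},  # malformed: no user_id
    {"user_id": "u1", "type": "view"},
]

# Validate the component's output against the expected behavior.
result = count_events_per_user(test_events)
assert result == {"u1": 2, "u2": 1}
```

In a real project, the same pattern applies to each component: feed known input events, then compare the output and side effects with the requirements.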

Integration testing

This testing type validates seamless communication of the big data application with third-party software and within and between its multiple components, as well as the compatibility of the different technologies used. It is performed with regard to your application’s unique architecture and tech stack.

For example, if your analytics solution comprises technologies from the Hadoop family (like HDFS, YARN, MapReduce, and other applicable tools), a test engineer checks the seamless communication between them and their elements (e.g., HDFS NameNode and DataNodes, YARN ResourceManager and NodeManager).

ScienceSoft's tip: With event-driven applications, event producers and consumers rely on their data schemas to interact. So, while validating the integrations within the operational part of your big data app, a test engineer and a data engineer should together check that the data schemas are properly designed, are compatible with each other initially, and remain compatible after any changes.
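A schema compatibility check of this kind could look like the sketch below. It assumes a deliberately simplified schema representation (a plain dict mapping field names to type names); real projects typically use a schema format and registry of their choice, and the function name `find_incompatibilities` is hypothetical.

```python
def find_incompatibilities(producer_schema, consumer_schema):
    """Return the problems that would stop a consumer from reading producer events.

    Both schemas are plain dicts mapping field name -> type name (a simplification).
    """
    problems = []
    for field, expected_type in consumer_schema.items():
        if field not in producer_schema:
            problems.append(f"missing field: {field}")
        elif producer_schema[field] != expected_type:
            problems.append(
                f"type mismatch for {field}: "
                f"{producer_schema[field]} != {expected_type}"
            )
    return problems


consumer = {"order_id": "string", "amount": "double"}
# Version 1 adds an extra field, which the consumer can simply ignore.
producer_v1 = {"order_id": "string", "amount": "double", "currency": "string"}
# Version 2 changes the type of a field the consumer depends on.
producer_v2 = {"order_id": "string", "amount": "string"}

issues_v1 = find_incompatibilities(producer_v1, consumer)
issues_v2 = find_incompatibilities(producer_v2, consumer)
```

Running such a check after every schema change helps catch a breaking change before it reaches the consumers.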

Performance testing

To ensure the big data application’s stable performance, performance test engineers should:

- Measure the big data app’s actual access latency, maximum data capacity and processing capacity, and response time from different geographical locations (as network throughput may vary across regions).

- Check the application’s handling of stress load and load spikes.

- Validate the app’s optimal resource consumption.

- Trace the app’s scalability options.

- Run tests validating the core big data application functionality under load.
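The latency measurement from the list above can be sketched with the standard library alone. This is only an illustration: `fake_query` is a placeholder for a real request to the system under test, and a dedicated load testing tool would normally generate the load.

```python
import statistics
import time


def measure_latencies(operation, requests):
    """Call `operation` `requests` times and return per-call latencies in seconds."""
    latencies = []
    for _ in range(requests):
        start = time.perf_counter()
        operation()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Return the median, 95th percentile, and maximum latency."""
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    percentiles = statistics.quantiles(latencies, n=100)
    return {
        "p50": statistics.median(latencies),
        "p95": percentiles[94],
        "max": max(latencies),
    }


def fake_query():
    """Placeholder for a real request to the system under test."""
    sum(range(1000))


summary = summarize(measure_latencies(fake_query, requests=200))
```

Reporting percentiles rather than an average makes load spikes and slow outliers visible.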

Security testing

To ensure the security of large volumes of highly sensitive data, a security test engineer should:

- Validate the encryption standards of data at rest and in transit.

- Validate data isolation and redundancy parameters.

- Detect the app’s architectural issues undermining data security.

- Check the configuration of role-based access control rules.

At a higher level of security provisioning, cybersecurity professionals perform application and network security scanning and penetration testing.
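As one illustration of checking role-based access control rules, the sketch below compares the permissions actually granted with an approved baseline. The role names, permission strings, and the `find_rbac_violations` helper are all hypothetical; in practice, the grants would be exported from the system under test.

```python
def find_rbac_violations(actual_grants, allowed_grants):
    """Return (role, permission) pairs that are granted but not allowed."""
    violations = []
    for role, permissions in actual_grants.items():
        extra = set(permissions) - set(allowed_grants.get(role, ()))
        violations.extend((role, permission) for permission in sorted(extra))
    return violations


# Approved baseline (assumed for the example).
allowed = {
    "analyst": {"read:reports"},
    "admin": {"read:reports", "write:config"},
}
# Grants as found in the environment under test (assumed for the example).
actual = {
    "analyst": {"read:reports", "write:config"},
    "admin": {"read:reports"},
}

violations = find_rbac_violations(actual, allowed)
```

Here the analyst role holds a write permission it should not have, so the check reports it.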

Big data warehouse testing

Test engineers check whether the big data warehouse processes SQL queries correctly, and validate the business rules and transformation logic within DWH columns and rows.
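A transformation rule check can be sketched with an in-memory SQLite database standing in for the warehouse. The table names, columns, and the business rule (load only paid orders and convert cents to dollars) are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE staging_orders (order_id INTEGER, amount_cents INTEGER, status TEXT);
    CREATE TABLE dwh_orders (order_id INTEGER, amount_usd REAL);
    INSERT INTO staging_orders VALUES
        (1, 1250, 'paid'), (2, 300, 'cancelled'), (3, 9999, 'paid');
    -- Transformation under test: load only paid orders, converting cents to dollars.
    INSERT INTO dwh_orders
    SELECT order_id, amount_cents / 100.0 FROM staging_orders WHERE status = 'paid';
""")

# Check the transformed values.
rows = conn.execute(
    "SELECT order_id, amount_usd FROM dwh_orders ORDER BY order_id"
).fetchall()

# Check the business rule: every paid order is loaded, and nothing else is.
mismatches = conn.execute("""
    SELECT COUNT(*) FROM staging_orders s
    LEFT JOIN dwh_orders d ON d.order_id = s.order_id
    WHERE (s.status = 'paid' AND d.order_id IS NULL)
       OR (s.status <> 'paid' AND d.order_id IS NOT NULL)
""").fetchone()[0]
```

The same two-step pattern (value check plus rule reconciliation) carries over to a real warehouse with its own SQL dialect.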

BI testing, as a part of DWH testing, helps ensure data integrity within an online analytical processing (OLAP) cube and smooth functioning of OLAP operations (e.g., roll-up, drill-down, slicing and dicing, pivot).
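One simple integrity check for roll-up and drill-down is that the detailed values add up to the aggregated ones. The sketch below assumes the two result sets have already been fetched from the BI layer; the dimension names (region, city) and the `check_roll_up` helper are hypothetical.

```python
def check_roll_up(drill_down, roll_up):
    """Return the regions whose roll-up total differs from the sum of their cities.

    drill_down: {(region, city): value}; roll_up: {region: value}.
    """
    recomputed = {}
    for (region, _city), value in drill_down.items():
        recomputed[region] = recomputed.get(region, 0) + value
    regions = set(recomputed) | set(roll_up)
    return sorted(r for r in regions if recomputed.get(r) != roll_up.get(r))


# Drill-down result by city and roll-up result by region (assumed values).
by_city = {("EU", "Berlin"): 120, ("EU", "Paris"): 80, ("US", "Austin"): 200}
by_region = {"EU": 200, "US": 210}

inconsistent = check_roll_up(by_city, by_region)
```

In this example, the US total does not match the sum of its cities, so the check flags it for investigation.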

Non-relational database testing

Test engineers check how the database handles queries. It is also advisable to verify the database configuration parameters that may undermine the application’s performance, as well as the data backup and restore process.

ScienceSoft's tip: Keep in mind that test case design will depend on your solution’s specifics, as different NoSQL databases use different data structures and query languages.

Big data quality assurance

With big data applications, it’s pointless and unfeasible to strive for complete data consistency, accuracy, auditability, orderliness, and particularly uniqueness (data is replicated by a number of the big data app’s components for the sake of fault tolerance). Still, big data test engineers and data engineers should check whether your big data is of good enough quality at these potentially problematic levels:

- Batch and stream data ingestion.

- ETL (extract, transform, load) process.

- Data handling within analytics and transactional applications, and analytics middleware.
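Because complete uniqueness and accuracy are not the goal, data quality checks are often expressed as rates compared against agreed thresholds. The sketch below shows the idea for one batch of records; the field names, the thresholds, and the `quality_report` helper are assumptions for illustration.

```python
def quality_report(records, key_field, required_fields):
    """Compute the duplicate-key rate and the rate of records with missing values."""
    total = len(records)
    if total == 0:
        return {"duplicate_rate": 0.0, "missing_rate": 0.0}
    keys = [record.get(key_field) for record in records]
    duplicate_rate = 1 - len(set(keys)) / total
    missing = sum(
        1 for record in records
        if any(record.get(field) is None for field in required_fields)
    )
    return {"duplicate_rate": duplicate_rate, "missing_rate": missing / total}


batch = [
    {"id": 1, "amount": 10.0},
    {"id": 2, "amount": None},  # missing required value
    {"id": 2, "amount": 5.0},   # duplicate key
    {"id": 3, "amount": 7.5},
]

report = quality_report(batch, key_field="id", required_fields=["amount"])

# Hypothetical thresholds; real ones come from the project's requirements.
thresholds = {"duplicate_rate": 0.3, "missing_rate": 0.3}
passed = all(report[name] <= limit for name, limit in thresholds.items())
```

The same report can be computed after ingestion, after ETL, and inside the consuming applications to see where quality degrades.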

How to Get Started with Big Data App Testing

1. Designing the big data application testing process

Assign a QA manager to ensure your big data app’s requirements specification is designed in a testable way. Each big data application requirement should be clear, measurable, and complete; functional requirements can be designed in the form of user stories.

Also, the QA manager designs a KPI suite, including such software testing KPIs as the number of test cases created and run per iteration, the number of defects found per iteration, the number of rejected defects, overall test coverage, defect leakage, and more. Besides, a risk mitigation plan should be created to address possible quality risks in big data application testing.
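Two of these KPIs can be computed as simple ratios. The definitions below are one common way to calculate them, not necessarily the formulas used in a given project, and the numbers are placeholders.

```python
def defect_leakage(found_in_testing, found_after_release):
    """Share of all defects that were missed in testing and found after release."""
    total = found_in_testing + found_after_release
    return found_after_release / total if total else 0.0


def rejection_rate(rejected_defects, reported_defects):
    """Share of reported defects that were rejected as invalid."""
    return rejected_defects / reported_defects if reported_defects else 0.0


# Placeholder numbers for one iteration.
leakage = defect_leakage(found_in_testing=45, found_after_release=5)
rejected = rejection_rate(rejected_defects=4, reported_defects=50)
```

Tracking these values per iteration shows whether the testing process is improving over time.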

At this stage, you should also outline scenarios and schedules for communication between the development and testing teams. This gives test engineers a sufficient understanding of the big data app’s schema, which is essential for testing granularity and risk-based testing.

Finally, a QA manager decides on a relevant sourcing model for the big data application testing.

2. Preparing for big data application testing

Preparation for the big data testing process will differ based on the sourcing model you opt for: in-house testing or outsourced testing.

2.1. Preparing for in-house big data application testing

If you opt for in-house big data app testing, your QA manager outlines a big data testing approach, creates a big data application test strategy and plan, estimates the required effort, arranges training for test engineers, and recruits additional QA talent.

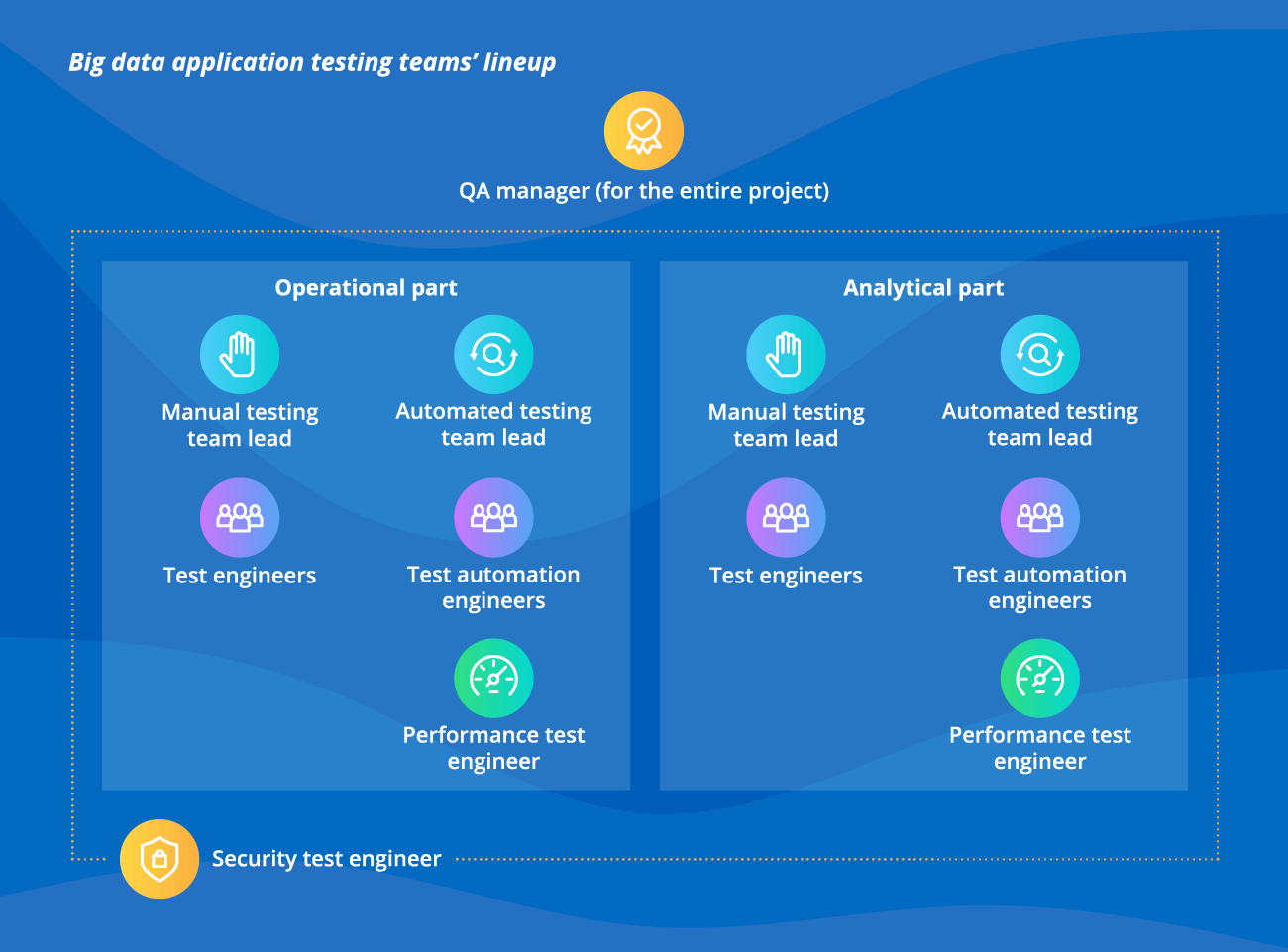

ScienceSoft’s best practice: When testing a big data application, we assemble and manage two testing teams. The first team, made up of specialists experienced in testing event-driven systems, non-relational databases, and more, caters to the operational part of the big data application. The second team takes care of the analytical part of the app and comprises specialists with experience in testing big data DWH, analytics middleware, and workflows.

ScienceSoft’s best practice: Test automation is relevant for big data software testing because of the vast volume and variety of data and the complex distributed architecture, which entail a large share of functional testing, data quality checks, performance testing, and regression testing. In our projects, we assign an automated testing lead to each of the big data testing teams to design a test automation architecture and to select and configure fitting test automation tools and frameworks.

2.2. Selecting a big data application testing vendor

If you lack in-house QA resources to perform big data application testing, you can turn to outsourcing. To choose a reliable vendor, you should:

- Consider vendors with hands-on experience in both operational and analytical big data application testing.

- Review the vendors’ case studies and pay attention to their big data tech stack.

- Consider if vendors have enough testing resources, as a big data application comprising the operational and the analytical part may require two testing teams of a substantial size.

- Consider vendors’ flexibility and scalability. During a big data app’s SDLC, the size of the testing teams may change for the sake of cost-effectiveness.

- Shortlist 3-5 vendors with relevant big data testing experience and technical knowledge. If a shortlisted vendor lacks some relevant competency, you may consider multi-sourcing.

- Request a big data app testing presentation and cost estimation from the shortlisted vendors.

- Consider the vendors’ approaches to the big data testing teams’ lineup, the planned share of test automation, and the testing toolkit. Choose the vendor that best corresponds to your big data project needs.

- Sign a big data testing collaboration contract and SLA.

During big data application testing, your QA manager should regularly measure the outsourced big data testing progress against the outlined KPIs and mitigate possible communication issues.

3. Launching big data application testing

Big data app testing can be launched when a test environment and a test data management system are set up.

Big data applications’ size makes it unfeasible to replicate an app completely in the test environment. Still, even though the test environment does not fully replicate production, make sure it provides high-capacity distributed storage to run tests at different scale and granularity levels.

The QA manager should design a robust test data architecture and management system that all team members can handle easily and that provides clear test data classification, quick scalability options, and a flexible test data structure.
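One way to get scalable and reproducible test data is to generate it from a fixed seed, so that the same data set can be recreated at any size. The sketch below is a minimal illustration; the event structure and the `generate_events` helper are hypothetical.

```python
import random


def generate_events(count, seed=42):
    """Generate `count` synthetic events; the same seed always yields the same data."""
    rng = random.Random(seed)
    event_types = ["click", "view", "purchase"]
    return [
        {
            "event_id": i,
            "user_id": f"user-{rng.randint(1, 100)}",
            "type": rng.choice(event_types),
        }
        for i in range(count)
    ]


# The same generator serves a quick functional check and a larger volume test.
small = generate_events(10)
large = generate_events(10_000)
```

Because generation is deterministic, a failed test can be rerun on exactly the same data, and a smaller data set is a prefix of a larger one.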

Consider Professional Big Data Testing Services

With experience in software testing services, software engineering, and data analytics since 1989, ScienceSoft’s testing professionals can ensure your big data app enables accurate analytics and smooth streaming data workloads.

Big data app testing consulting

ScienceSoft’s QA consultants:

- Design a general test strategy and plan for the entire big data application and test strategies for the operational and analytics parts.

- Create a test automation architecture for your analytics and operational big data app’s parts.

- Select optimal big data testing frameworks and tools based on ROI analysis.

- Provide a big data testing effort estimate and cost breakdown.

- Select an optimal sourcing model for your big data app testing project.

- Perform root-cause analysis and mitigation of big data application testing issues if your big data app testing project is already under way.

Outsourced big data app testing

ScienceSoft’s testing experts:

- Design the whole big data testing process: an overall QA strategy and test plan for the big data app and more precise ones for analytics and operational parts; test automation architecture with regard to the specifics of each big data app’s part; optimal testing toolkit to cater to the app’s unique tech stack.

- Set up and maintain the test environment, generate and manage test data.

- Develop, execute, and maintain test cases and scripts to ensure that each big data application component is optimally designed and configured and seamlessly communicates with the others, and that all the components form one smoothly functioning application.

- Develop a reusable automated regression test suite to support the big data application’s risk-free evolution.

Why Choose ScienceSoft for Big Data App Testing

- In software testing since 1989, and in test automation since 2001.

- In big data testing services since 2013.

- ISO 27001-certified to ensure the security of our clients’ sensitive information.

- ISTQB-certified QA engineers.

- Experience in 30+ industries, including healthcare, financial services, retail, wholesale, logistics, oil & gas, and telecommunications.

- Standardized defects description, test cases design, and test reporting in accordance with ISO/IEC/IEEE 29119-3:2013.

ScienceSoft as a reliable software testing provider

Our cooperation with ScienceSoft was a result of a competition with another testing company where the focus was not only on quantity of testing, but very much on quality and the communication with the testers. ScienceSoft came out as the clear winner.

We have worked with the team in very close cooperation ever since and value the professional as well as flexible attitude towards testing.

Roderick Schipper, CTO, helpLine B.V.

Typical Roles in ScienceSoft's Big Data Testing Teams

Testing a big data application comprising the analytical and the operational part usually requires two corresponding testing teams. Below we describe typical testing project roles relevant for both teams. Their specific competencies will differ and depend on the architectural patterns and technologies used within the two big data application parts.

QA manager (for the entire project)

- Ensures testability of a big data application requirements specification, as well as completeness and availability of its architecture and tech stack documents.

- Draws up a big data application test strategy and plan.

- Selects big data test management tools.

- Designs a test data architecture and a test data management system.

- Assembles and helps establish the collaboration between the two testing teams.

- Oversees overall project execution.

Manual testing team lead

- Defines the manual testing scope for the corresponding big data application part.

- Draws up a precise test plan for the respective big data application part.

- Manages test engineers, solves ongoing issues, and escalates complex problems to a QA manager.

- Measures and analyzes test engineers’ performance and introduces relevant testing process improvements.

Automated testing team lead

- Designs a test automation architecture for the respective big data application part.

- Chooses the appropriate test automation frameworks and tools, or organizes the development of a custom test automation tool relevant for the big data system under test.

- Manages test automation engineers, promotes and evaluates test scripts’ granularity and maintainability.

Test automation engineer

- Develops, runs, and maintains automated UI and API big data test scripts.

- Reports defects after analyzing the test results.

- Creates and maintains a re-usable regression test suite to check the consistency of the relevant big data app part after any introduced changes.

Test engineer

- Studies the big data application’s requirements specification and architecture for better test coverage and granularity.

- Designs, executes, and maintains test cases to validate the big data app’s complex end-to-end user scenarios.

- Reports found defects in a test management tool.

Performance test engineer

- Sets up, maintains, and operates big data performance test environment and tools.

- Collaborates with the big data development team to outline architectural bottlenecks that may disrupt the app’s performance.

- Analyzes performance issues and provides recommendations on their mitigation.

Security test engineer

- Develops a threat model for the big data application to proactively outline potential security issues and help the big data app’s architect and developers address them during the application design.

- Carries out vulnerability assessment (manual evaluation and automated scanning) and penetration testing of the big data application.

- Ranks the detected vulnerabilities with regard to WASC, OWASP, and CVSS classifications and provides recommendations on issues’ mitigation.
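Ranking by CVSS can be illustrated with the qualitative severity scale defined for CVSS v3.x (None, Low, Medium, High, Critical). The finding IDs and scores below are placeholders, and `cvss_severity` is a hypothetical helper name.

```python
def cvss_severity(score):
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS score must be between 0.0 and 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


# Placeholder findings from a vulnerability assessment.
findings = [
    {"id": "F-1", "cvss": 5.3},
    {"id": "F-2", "cvss": 9.8},
    {"id": "F-3", "cvss": 3.1},
]

# Rank findings from the most to the least severe.
ranked = sorted(findings, key=lambda finding: finding["cvss"], reverse=True)
report = [(finding["id"], cvss_severity(finding["cvss"])) for finding in ranked]
```

The ranked list gives the development team a clear order in which to address the issues.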

Note: the actual number of test automation engineers and test engineers depends on the number of the app's components and workflow complexity.

Big Data App Testing Sourcing Models

QA management and testing teams are in-house

- Full control of the big data application testing process.

- The need to swiftly acquire QA talents experienced with testing relevant big data application components and technologies.

- Necessity to quickly scale up and down the testing teams in accordance with the current workload.

QA management is in-house, one or both the testing teams are external

- The ability to promptly alter the number of a vendor’s big data testing talents to keep the test budget lean.

- The need to have a highly skilled QA manager to create an efficient big data application test strategy and plan, guide and improve the big data testing process, evaluate test engineers’ performance, and facilitate communication between the development and testing teams.

QA management and testing teams are outsourced

- Possibility to optimize testing time and costs due to a vendor’s big data testing and test automation proficiency and experienced QA management.

- Quick testing teams’ scalability.

- Increased vendor risks.

Benefits of Cooperation with ScienceSoft

Predictable costs

A professional testing team of balanced number and qualifications along with transparent quotes makes the QA and testing budget coherent and predictable.

Testing transparency

KPI-based service delivery and transparent testing documentation according to ISO/IEC/IEEE 29119-3:2013.

High competence

ScienceSoft’s testing engineers have experience in 30+ industries and multiple business domains.

Big Data App Testing Costs

The testing budget for each big data application is different due to its specific characteristics determining the testing scope.

Factors determining the big data application testing scope:

- Number and type of big data sources.

- Number and type of big data application architectural components (e.g., analytics app and middleware, a stream processing solution, a machine learning system, etc.).

- Big data application technologies’ stack.

- Big data app’s performance requirements (including scalability, reliability, average response time, the number of transactions per unit time, etc.).

- Complexity and number of BI layer functions as well as the number of user roles.

Cost calculation factors for big data application testing include:

For outsourced big data testing

- Size of big data application testing team/teams.

- Testing team members’ rates with regard to their competences and experience.

- Big data application testing time based on:

  - Number of iterations (if development and testing go in parallel).

  - Total number of test cases/scripts.

  - Development and maintenance efforts per test case/script.

  - Percentage of test automation.

  - Regression test coverage.

For in-house big data testing

- Number of QA and testing professionals.

- Big data testing and QA professionals’ fully loaded salary.

- Additional training for your testing talents, if required.

Additionally, either for outsourced or in-house big data application testing, you should factor in the cost of employed tools (e.g., testing frameworks’ licenses, compute nodes, virtual machines and storage, database and streaming services, etc.).
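The factors above can be combined into a rough arithmetic model. The sketch below is a simplification that assumes every team member works the same number of hours; all rates, salaries, and tool costs are placeholder numbers, not real market figures.

```python
def outsourced_cost(team, hours_per_member, tools_cost):
    """Estimate outsourced testing cost.

    team: list of (role, hourly_rate) tuples.
    """
    labor = sum(rate * hours_per_member for _role, rate in team)
    return labor + tools_cost


def in_house_cost(headcount, monthly_loaded_salary, months, training_cost, tools_cost):
    """Estimate in-house testing cost from fully loaded salaries."""
    return headcount * monthly_loaded_salary * months + training_cost + tools_cost


# Placeholder inputs for illustration only.
team = [("test engineer", 40), ("test automation engineer", 50)]
outsourced = outsourced_cost(team, hours_per_member=100, tools_cost=1000)
in_house = in_house_cost(
    headcount=2, monthly_loaded_salary=8000, months=3,
    training_cost=2000, tools_cost=1000,
)
```

A real estimate would refine the hours per role using the number of test cases, the share of automation, and the regression coverage listed above.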

Although a thorough analysis of these factors is needed to calculate the actual cost of big data app testing, a very approximate TCO for testing a big data application with operational and analytical parts is often around $300,000-$800,000.


About ScienceSoft

ScienceSoft is a global IT consulting, software development, and QA company headquartered in McKinney, TX, US. We offer QA outsourcing for big data testing projects and help our clients ensure their big data applications’ quality, security, prompt availability, and robustness while balancing testing costs and time. Being ISO 9001 and ISO 27001 certified, we rely on a mature quality management system and guarantee that cooperation with us does not pose any risks to our clients’ data security.