Low-Latency Applications

Architecture and Key Techs

In software development since 1989, ScienceSoft helps companies across 30+ industries build low-latency applications that provide near real-time response to high volumes of rapidly incoming data.

Global Decision-Makers Push for Lower Latency

Over 1,700 senior IT decision-makers and C-suite executives worldwide participated in a recent Quadrant Strategies survey dedicated to next-gen applications and edge computing. 56% of them say that within five years their mission-critical apps will require latency below 5 ms.

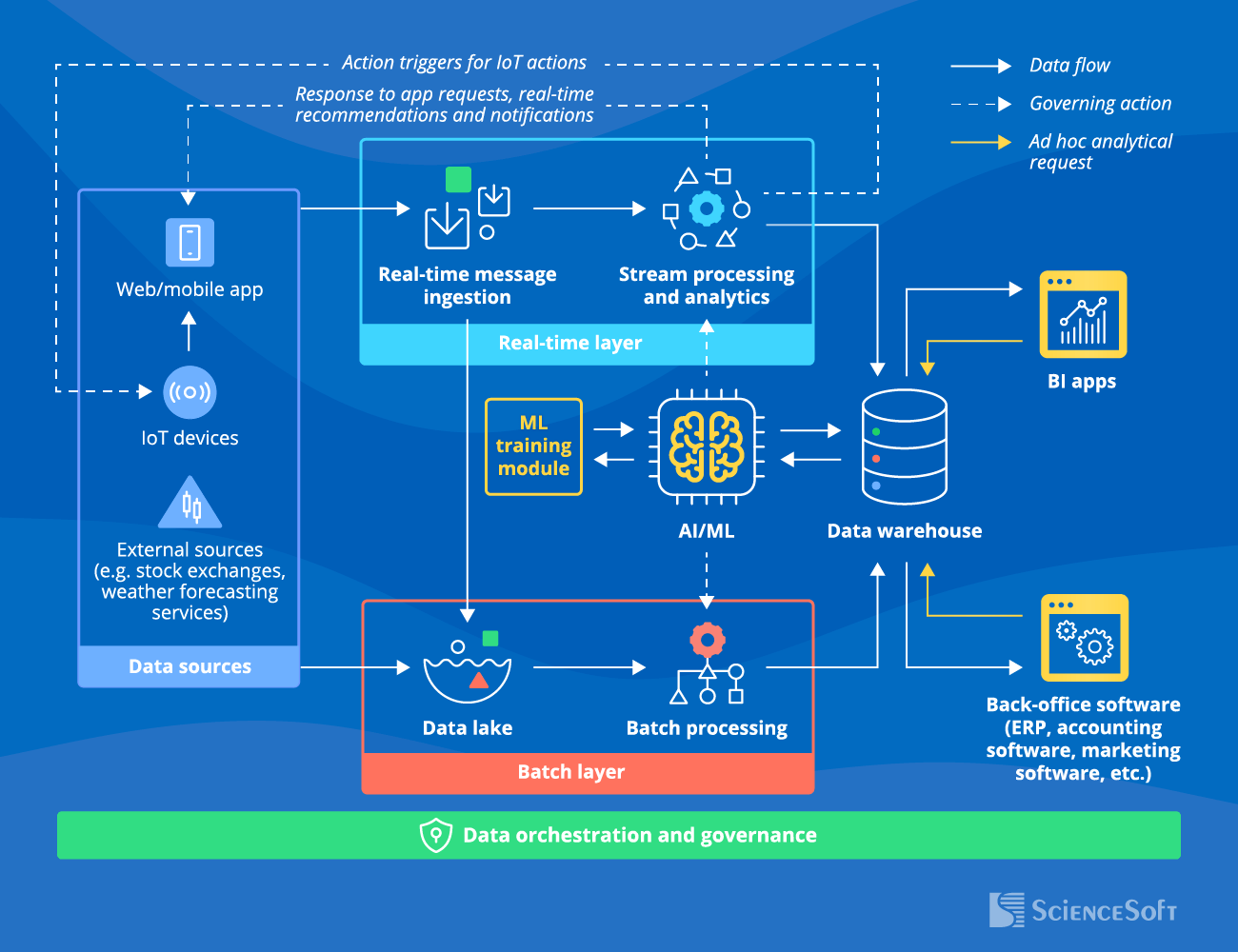

Sample Architecture of a Low-Latency Application

Below, ScienceSoft's data engineers describe a high-level architecture and the key data flows of a low-latency application.

Primary data sources for low-latency systems may include user apps (web and mobile), IoT devices (wearables, sensors, actuators), or external systems (e.g., stock market or weather data feeds).

The data flow is divided into two layers for parallel real-time and batch processing. The real-time layer is responsible for low-latency response to real-time events, while the batch layer enables cost-effective storage and analytics of historical data.

Real-time layer

- Receives the latest data through a real-time (stream) message ingestion engine and sends it on for processing.

- The stream processing and analytics module enables low-latency response to events and real-time output (e.g., adjusting the temperature in a smart home, confirming a payment, offering personalized recommendations to an ecommerce customer).
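To make the real-time layer more concrete, here is a minimal Python sketch of per-event stream processing, using the smart home example above. It is an illustration under stated assumptions, not a production design: the `Reading` and `ThermostatStream` names, the 0.5 °C tolerance, and the five-reading window are all hypothetical, and a real system would receive events from a message ingestion engine rather than from a Python list.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional


@dataclass
class Reading:
    sensor_id: str
    temperature_c: float


class ThermostatStream:
    """Reacts to each reading as it arrives instead of waiting for a batch."""

    def __init__(self, target_c: float, tolerance_c: float = 0.5, window: int = 5):
        self.target_c = target_c
        self.tolerance_c = tolerance_c
        # Bounded window: memory use and per-event work stay constant.
        self.recent: Deque[float] = deque(maxlen=window)

    def on_event(self, reading: Reading) -> Optional[str]:
        """Process one event and return an action, or None if none is needed."""
        self.recent.append(reading.temperature_c)
        average = sum(self.recent) / len(self.recent)
        if average > self.target_c + self.tolerance_c:
            return "cool"
        if average < self.target_c - self.tolerance_c:
            return "heat"
        return None


stream = ThermostatStream(target_c=21.0)
actions = [
    stream.on_event(Reading("living-room", t)) for t in (21.0, 21.2, 23.5, 24.0)
]
print(actions)  # [None, None, 'cool', 'cool']
```

The point of the sketch is that each event is handled on arrival with a small, fixed amount of work, which is what keeps the response time predictable.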

Batch layer

- Captures historical data in a data lake — the raw storage that holds data in its initial format (structured, unstructured, or semi-structured).

- The batch processing module processes data according to the established computation schedule (e.g., every hour, every 12 hours, every week). It filters, cleans, aggregates, and otherwise prepares data for analytics.
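The batch steps named above (filter, clean, aggregate) can be sketched in a few lines of Python. This is a simplified illustration: the record fields (`sensor`, `ts`, `value`) and the hourly bucketing are assumptions made for the example, and a real batch module would read from the data lake and run on a scheduler.

```python
from collections import defaultdict
from datetime import datetime, timezone
from statistics import mean


def run_batch(raw_records):
    """Filter, clean, and aggregate raw records into hourly averages per sensor."""
    buckets = defaultdict(list)
    for record in raw_records:
        value = record.get("value")
        # Filter: drop records with missing or non-numeric values.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            continue
        # Clean: normalize the Unix timestamp to the start of its UTC hour.
        ts = datetime.fromtimestamp(record["ts"], tz=timezone.utc)
        hour = ts.replace(minute=0, second=0, microsecond=0)
        buckets[(record["sensor"], hour.isoformat())].append(float(value))
    # Aggregate: one row per sensor per hour, ready to load into the DWH.
    return [
        {"sensor": sensor, "hour": hour, "avg": mean(values), "count": len(values)}
        for (sensor, hour), values in sorted(buckets.items())
    ]


raw = [
    {"sensor": "a", "ts": 0, "value": 10},
    {"sensor": "a", "ts": 1800, "value": 20},
    {"sensor": "a", "ts": 3600, "value": 30},
    {"sensor": "a", "ts": 4000, "value": None},  # dropped by the filter
]
rows = run_batch(raw)
for row in rows:
    print(row)
```

Because this job runs on a schedule rather than per event, it can afford heavier work per run, which is why the batch layer is the cheaper place for historical analytics.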

The data warehouse (DWH) stores highly structured data and analytical results received from stream and batch processing modules. The DWH then serves the analytics insights to BI software and back-office systems. Analysts and data scientists can query the DWH for ad hoc data exploration.

The artificial intelligence or machine learning (AI/ML) engine is an optional module that enables advanced analytics capabilities (intelligent forecasting, smart recommendations, dynamic process optimization, etc.). The engine's output goes to the corresponding processing module and triggers a relevant action (e.g., stopping a financial transaction that was identified as fraudulent). The ML engine's accuracy is continuously improved with the help of the machine learning training module.

The data orchestration and governance system automates recurring data processing tasks (e.g., cleansing and transforming data and moving it between the architecture modules) and ensures consistent data quality, security, and compliance throughout the data lifecycle.

Why is high latency bad? Spoiler: sometimes it isn’t

First of all, high latency isn’t bad by default: most software doesn’t need response times in single-digit milliseconds to function smoothly. If software owners are willing to settle for fractionally slower output, it’s a valid way to reduce the solution TCO. But there are cases where high latency is a deal-breaker — from online multiplayer games to trading systems, heart monitoring devices, production automation solutions, and so on. Ultimately, before deciding on the latency threshold, you need to look at the purpose of your app and determine the highest priority: data processing speed, user convenience, analytics complexity, optimized costs, or something else. That’s what we discuss with our clients first, and that’s how we design apps that provide optimal latency without becoming a budget black hole.

ScienceSoft: Sharing Decades of Experience for Your Digital Success

- Since 1989 in software engineering, data analytics, and data science.

- Since 2003 in end-to-end big data services.

- Hands-on experience building software for 30+ industries, including healthcare, BFSI, manufacturing, retail, entertainment, and telecoms.

- Solutions architects with 7–20 years of experience.

- Expertise in advanced technologies, including big data, ML, IoT, and blockchain.

- ISO 9001-certified quality management system.

- ISO 27001-certified security management system.

Let's Build a Low-Latency App That Will Drive Your Success

Relying on our mature project management practices, we drive projects to their goals despite time and budget constraints and changing requirements.

Frequently Asked Questions

What is latency?

Latency is the time between an event and the system's response to it. It depends on network performance and on how data is processed (e.g., how computation and storage resources are allocated).
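As a rough illustration of this definition, the Python sketch below times a single request handler. It captures only the processing part of latency, not network time, and the `timed` and `handle` functions are hypothetical names introduced for the example.

```python
import time


def timed(handler, event):
    """Run handler(event) and return (result, latency in milliseconds)."""
    start = time.perf_counter_ns()  # monotonic, high-resolution clock
    result = handler(event)
    elapsed_ms = (time.perf_counter_ns() - start) / 1_000_000
    return result, elapsed_ms


def handle(event):
    # Hypothetical handler standing in for real processing work.
    return event["amount"] * 2


result, latency_ms = timed(handle, {"amount": 21})
print(result, f"{latency_ms:.3f} ms")
```

To capture end-to-end latency, the start timestamp would have to be taken where the event originates (e.g., on the client or device), which also requires synchronized clocks or round-trip measurement.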

What is a low-latency application?

A low-latency application is a system that responds to events and user requests with consistently low latency (often measured in milliseconds or even microseconds).
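"Consistently" is the key word here: an average can look fine while a small share of requests is very slow. One common way to check consistency is to look at high percentiles of measured latency. The sketch below uses the nearest-rank method on made-up sample values; the `percentile` helper is written for this example rather than taken from a library.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# 98 fast responses and 2 slow ones, in milliseconds (made-up numbers).
latencies_ms = [2.0] * 98 + [250.0] * 2

p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
average = sum(latencies_ms) / len(latencies_ms)
print(f"p50={p50} ms, p99={p99} ms, average={average:.2f} ms")
# p50=2.0 ms, p99=250.0 ms, average=6.96 ms
```

In this sample, the median and the average both suggest a fast system, while the 99th percentile reveals that some requests take 250 ms.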

What are examples of low-latency apps?

Examples of low-latency software include video conferencing apps (Zoom, Skype), social media and messaging apps (WhatsApp, Instagram), live streaming platforms (Twitch, YouTube Live), navigation apps (Google Maps), ridesharing and delivery apps (Uber, DoorDash), online games (Fortnite, League of Legends), and more.

Why is low latency important, and what are its benefits?

Low latency is critical for smooth app performance, timely analytics insights, and immediate action triggers. Its benefits include improved user experience and higher user loyalty, accurate and up-to-date analytics, efficient remote control (for IoT systems), effective emergency response, and more.

How does latency influence performance?

Latency is one of the key metrics used to evaluate app performance. High latency often causes delays in data processing, which results in poor app responsiveness and outdated analytical output.

What are the steps to build a low-latency app?

Since low-latency apps are built for various purposes, there is no go-to manual for developing one. However, we can recommend a few software engineering best practices that enable low latency.

1. Consider using a content delivery network: it reduces latency by serving content from a server closest to the user's location.

2. Use caching: it allows the app to store frequently accessed data locally on the user’s device and to retrieve it from memory instead of sending identical requests to the server every time.

3. Ensure sufficient resources: reliably low latency cannot be achieved when capacity is sized for average load, so it is crucial to provide headroom for traffic spikes and other unexpected load increases.

4. Implement load balancing to distribute traffic across multiple servers and avoid single-server overload.
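Two of the practices above, caching and load balancing, are easy to illustrate in code. The Python sketch below shows a small in-memory cache with time-based expiry and a least-connections balancer. Both classes, the server names, and the 30-second expiry are hypothetical; in practice, teams typically rely on an existing cache and load balancer rather than writing their own.

```python
import time


class TTLCache:
    """In-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # hit: no request to the server
        value = loader(key)  # miss or expired: fetch once and remember
        self._entries[key] = (now + self.ttl_seconds, value)
        return value


class LeastConnectionsBalancer:
    """Routes each request to the server with the fewest in-flight requests."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        self.active[server] -= 1


calls = []


def slow_lookup(key):
    # Stand-in for an expensive server request.
    calls.append(key)
    return key.upper()


cache = TTLCache(ttl_seconds=30)
cache.get_or_load("profile", slow_lookup)
cache.get_or_load("profile", slow_lookup)  # served from memory
print(len(calls))  # 1

balancer = LeastConnectionsBalancer(["app-1", "app-2"])
first = balancer.acquire()
second = balancer.acquire()
print(first, second)  # app-1 app-2
```

The expiry time is the main design trade-off in the cache: a longer value saves more requests but raises the risk of serving stale data.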

For more detailed information on software development stages and best practices, check out our dedicated guide.

May Your Low-Latency App Bring Lightning-Fast Results!

And if you need expert help to implement an optimal solution, ScienceSoft is ready to support you in this journey.