How to Build a Data Warehouse from Scratch

Approaches, Plan, Software, and Costs

Since 2005, ScienceSoft has been providing data warehousing services to help companies build cost-effective and high-performing data warehousing solutions.

Building a Data Warehouse: the Summary

The major steps for building a data warehouse include requirements engineering, solution conceptualization, project roadmap development, solution design, DWH platform customization, ETL/ELT pipelines creation, DWH performance monitoring and adjustment. Below, you can see a summary of the required steps or explore a more detailed guide further down the page.

How to build a data warehouse in 7 steps:

- Elicit DWH goals at the company, team, and business user levels.

- Conceptualize DWH features and select the optimal platform.

- Create a business case and develop a project roadmap.

- Design data cleansing and security policies, data models, and the core architecture components.

- Customize the selected DWH platform and develop ETL/ELT pipelines.

- Migrate data to the DWH.

- Tune ETL/ELT pipelines and adjust DWH performance.

Project time: From 3 to 12 months.

Cost: Starts from $70,000. Feel free to use our online calculator to get a tailored ballpark estimate.

Team: A project manager, a business analyst, a data warehouse system analyst, a data warehouse solution architect, a data engineer, a quality assurance engineer, a DevOps engineer.

Approaches to Building a Data Warehouse

A typical architecture of a data warehouse solution includes the following layers:

Data source layer

– internal and external data sources.

Staging area

– a temporary area where data transformations take place. Absent if data transformations are performed in the data storage layer.

Data storage layer

– hosts a data warehouse database (a central database for storing a company’s data) and data marts (data warehousing subsets for storing data for a particular business line – finance, marketing, HR, etc.).

The data in the data warehouse database and data marts can then be queried via OLAP tools, data mining tools, reporting, and visualization tools.

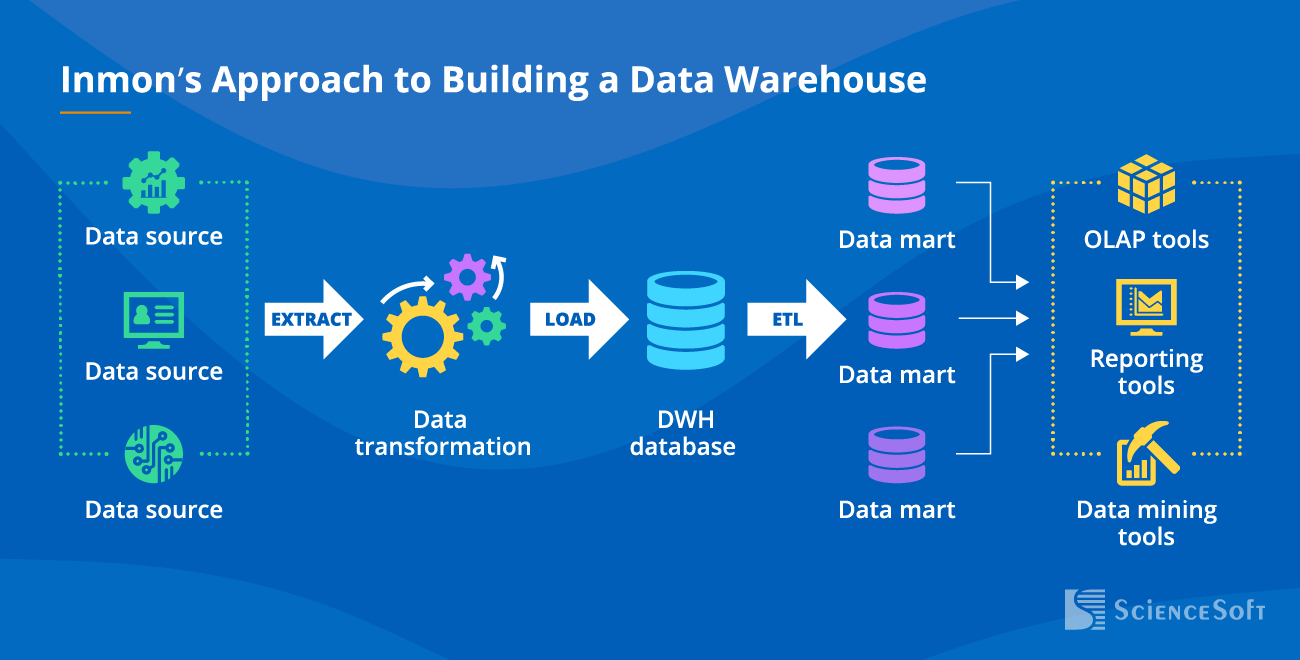

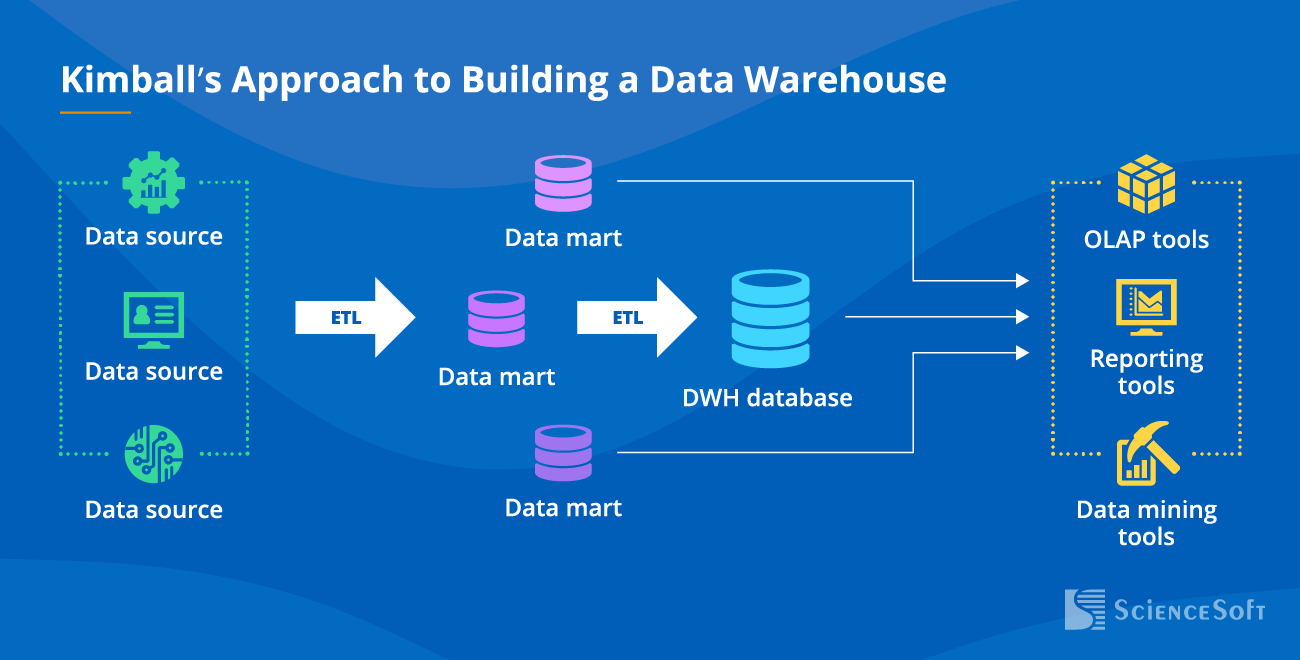

Different approaches to building a data warehouse concentrate on the data storage layer:

-

Inmon’s approach – designing centralized storage first and then creating data marts from the summarized data warehouse data and metadata.

- Kimball’s approach – creating data marts first and then developing a data warehouse database incrementally from independent data marts.

Data Warehouse Use Cases

Data warehouse adoption can help draw meaningful insights for financial planning and modeling, operational performance monitoring, risk management, and more. It can also be implemented for operational use cases like predictive maintenance, remote monitoring (e.g., of patient state, production processes), service personalization, etc.

Strategic decision-making

- Strategic reports and dashboards for top management.

- Financial performance data monitoring and benchmarking.

- Financial forecasting and strategic investment planning.

- Profitability analysis of customers and products.

- Strategic sourcing.

- Employee and team performance assessment and planning.

Data sources:

- Business management systems (ERP, CRM, CMS, EAM, PIM, etc.).

- External data (benchmarking data, surveys, public data, etc.).

- Multi-user role-based reports and dashboards.

- Consistency of overall corporate planning and planning for specific business areas.

- Budget allocation.

- Financial simulation and scenario considerations, contingency plans for a range of possible events.

Data sources:

- Operational data from functional areas (supply chain, production, marketing and sales, etc.).

- Financial data stores (ERP, financial management system, accounting system, etc.).

- Financial and operational performance reports, scorecards and dashboards for managers.

- Organization, team, employee or process performance tracking.

- Identification of business productivity, employee attrition, etc. drivers.

- Performance gaps anticipation, root cause analysis.

- Strategies for performance optimization for sales funnel, marketing campaigns, supply chain, etc.

Data sources: Business management systems (ERP, accounting management, CRM, supply chain management, etc.).

Tactical decision-making

- Tactical dashboards for managers and directors with continuously updated business data.

- Time-sensitive analytical querying to support production planning, inventory planning, logistic management, etc.

Data sources: Management information systems (inventory control, sales and marketing, accounting and finance, production, logistics, fleet, etc.).

- Operational dashboards for fast querying of large and granular transactional data in real time.

- Data-driven decision-making in the operational environment (order entry, banking operations, travel reservations, etc.).

- Alerting to the situations requiring immediate attention (risk management, fraud detection, etc.).

- Constantly updated operational forecasts and business outcome simulations in real time.

Data sources: historical and real-time data from transactional data stores.

- Reacting (e.g., triggering an alert) to particular events or a sequence of events in real time or near real time.

- Detecting event patterns and predicting reactions based on historical IoT data analysis.

- Predictive maintenance.

- Vehicle telematics.

- Smart building.

- Smart devices and wearables.

- Smart metering.

Data sources: IoT devices.

SaaS, XaaS, online services

- Support for data load scalability.

- Instant analytical querying of huge app data volumes.

- Support for machine learning capabilities (personalization, chatbots, etc.).

Data sources: applications, data and backup storage systems.

7 Steps to Building a Data Warehouse from Scratch

The suggested plan is based on ScienceSoft’s 20-year experience in data warehousing services and features the usual procedure we follow when implementing a DWH. Notice that the project timeframes are approximate, as the duration of the data warehouse development process depends on a variety of factors, including the complexity and quality of data in source systems, data security requirements, data analytics objectives, etc.

Step 1.

Determine the goals

- Discovery of your business objectives (tactical and strategic) to be pursued with the data warehouse development project.

- Identification and prioritization of the company’s, teams’, business users’ expectations and needs from the project.

- Review of the company’s current technological architecture, applications in use, etc.

- Conducting a preliminary data source analysis (data type and structure, volume, sensitivity, etc.).

- Outlining the data warehouse scope and high-level system requirements, including security and compliance requirements: GDPR (for the EU), PDPL (for Saudi Arabia), HIPAA (for the healthcare industry), etc.

Step 2.

Develop a concept and choose the platform

- Defining the desired data warehouse solution feature set.

- Choosing the optimal deployment option (on-premises/in-cloud/hybrid).

- Choosing the optimal architectural design approach to building a data warehouse.

- Selecting the data warehouse technologies (DWH database, ETL/ELT tools, data modeling tools, etc.), taking into account:

- Number of data sources and data volume to be loaded into the data warehouse.

- Data flows to be implemented.

- Data security requirements.

Close cooperation of business users with a BA and a solution architect while defining the core and advanced functionality of the future solution helps avoid overcomplicating the data warehouse architecture and select the most cost-effective tech stack.

Step 3.

Create a business case and a project roadmap

Major activities include:

- Defining data warehouse development project scope, budget planning, timeline, etc.

- Scheduling DWH design, development and testing activities.

- Drawing up a data warehouse project scope document, data warehouse solution architecture vision document, data warehouse deployment strategy, testing strategy, project implementation roadmap.

- Developing a risk management plan.

- Estimating efforts for the data warehouse development project, TCO and ROI.

Rigorous planning of a data warehouse development project helps reduce up to 30% of project time and budget, so ScienceSoft’s team carefully elaborates on the findings of the preceding stages.

Step 4.

Analyze the system and design the architecture

- Detailed analysis of each data source:

- Data type and structure (data models, if any).

- Data volume generated daily.

- Degree of data sensitivity and an applied data access approach.

- Data quality, missing/poor data, possibility to perform data cleansing in the data source system.

- Identification if any data is absent/of enough quality to support the business requirements.

- Frequency of data updates.

- Relation to other data sources.

- Designing data cleansing policies.

- Creating data security policies (data access policies based on legal restrictions and data security rules, data encryption policies, policies for data access monitoring and data compliance, data backup strategy, etc.)

- Designing data models for the data warehouse and data marts.

- Identifying data objects as entities or attributes; identifying relationships between entities.

- Mapping data objects into the data warehouse.

- Designing ETL/ELT processes for data integration and data flow control.

To create a blueprint for the data ecosystem fully tailored to the customer’s particular business needs, ScienceSoft engages senior-level system analysts with considerable experience in the corresponding industry.

Step 5.

Develop and stabilize the solution

- Data warehouse platform customization.

- Configuring data security software and implementing data security policies (applying data security policies to data at the row, column, etc. level, developing custom security procedures, and more).

- Developing ETL/ELT pipelines and ETL/ELT testing.

- Data warehouse performance testing.

To ensure the speed and frequency of releases without sacrificing the solution’s quality, ScienceSoft follows the DevOps-driven iterative development approach.

Step 6.

Launch the solution

- Data migration, data quality assessment.

- Introducing the data warehouse to business users.

- Having user acceptance tests.

- Conducting user training sessions and workshops.

Step 7.

Ensure after-launch support

- ETL/ELT performance tuning.

- Adjusting data warehouse performance and availability.

- Supporting end users.

Consider Professional Services for Data Warehouse Development

Since 2005, ScienceSoft has been providing a full range of data warehouse consulting and development services to help companies build a cost-efficient and scalable data warehouse solution to address their data management and analytics needs. With established project management practices, we drive projects to their goals regardless of time and budget constraints.

Data warehouse consulting

- Requirements engineering.

- Business case creation, recommendations on data warehouse price optimization.

- Data warehouse conceptualization and software selection.

- Solution architecture design.

- Data governance design.

- Data warehouse system analysis.

- Data modeling and ETL/ELT design.

Data warehouse development

- Data warehouse requirements engineering.

- Solution conceptualization and platform selection.

- Solution architecture design.

- Data warehouse system analysis.

- Data modeling and ETL/ELT design.

- Solution development.

- Data warehouse quality assurance and launch.

- After-launch support.

ScienceSoft as a Trusted DWH Partner

When we first contacted ScienceSoft, we needed expert advice on the creation of the centralized analytical solution to achieve company-wide transparent analytics and reporting. After a series of interviews, ScienceSoft’s consultants analyzed our workloads, documentation, and the existing infrastructure and provided us with a clear project roadmap.

They stayed in daily contact with us, which allowed us to adjust the scope of works promptly and implement new requirements on the fly. Additionally, the team delivered demos every other week so that we could be sure that the system aligned with our business needs.

Heather Owen Nigl, Chief Financial Officer, Alta Resources

Talents Required for Building a Data Warehouse

The responsibilities of the data warehouse development team cover project management, business and system analysis, architecture design, tech stack selection, quality assurance, and more. Each role contributes to different stages of the process, from solution design to its implementation, testing, and maintenance.

Project manager

- Outlining data warehouse development project scope and objectives, determining deliverables for each project step, and ensuring their delivery.

- Providing estimations for the data warehouse development efforts and managing resources.

- Data warehouse development project scheduling, delivering project updates to stakeholders.

- Measuring project performance and identifying areas of improvement.

Business analyst

- Defining functional and non-functional data warehouse requirements, data warehouse limitations.

- Documenting the scope of the data warehouse solution, its constituents, integration into the existing analytics environment (if any).

Data warehouse system analyst

- Analyzing data sources and data analytics software (if any).

- Defining a data warehouse system requirements specification for creating data models, designing ETL/ELT processes.

- Auditing the quality of data loaded into the data warehouse.

Data warehouse solution architect

- Designing a data warehouse solution architecture.

- Creating a data governance strategy, policies, and standards.

- Suggesting the data warehouse tech stack.

Data engineer

- Developing data models and their structures.

- Developing and maintaining a data pipeline to route source data to the data warehouse.

- Building the ETL/ELT process.

Quality assurance engineer

- Reviewing data warehouse tech design documents.

- Designing a test strategy.

- Designing, developing, and maintaining tests to evaluate the developed data warehouse solution.

DevOps engineer

- Setting up the data warehouse software development infrastructure.

- Introducing continuous integration/continuous deployment (CI/CD) pipelines to automate and streamline data warehouse development processes.

Sourcing Models

Below, you can compare pros and cons of building a data warehouse in-house, outsourcing the initiative to external specialists, and combining in-house management with outsourced development.

In-house data warehouse development

- Hands-on knowledge of data context.

- A one-time job requiring several team roles.

- Risk of project delays if a team member quits.

In-house management and outsourced development

- Quick tweaking the project in case of any internal business changes.

- Stable development process.

- Remote and often multi-lingual cooperation between project management and a development team.

Outsourced data warehouse development

- Hourly rate and result-based payment instead of a fixed salary.

- Variable team capacity driven by project needs.

- Potentially wider experience with DWH platforms.

- External access to corporate data.

Data Warehouse Software Worth Attention

If you are looking for the industry-best data warehousing platforms, explore our list of the best data management solutions for analytics we use in our projects. Each of the data warehousing services presented here is among leaders and strong performance according to The Forrester Wave and Gartner’s 2025 evaluation of cloud databases for analytics reports and offers you a platform for building a modern, high-performing data warehouse.

Amazon Redshift

Best for: big data warehouse

Description

- Integrations with the AWS ecosystem (Amazon S3, Amazon Athena, Amazon SageMaker, Amazon EMR, etc.)

- Integration with third-party ETL/ELT, BI, data modeling, etc. tools (Power BI, Tableau, Informatica, Qlik, Talend Cloud, and more).

- Fast query processing with AQUA (Advanced Query Accelerator), data compression, materialized views, and result caching.

- ML-optimized performance under varying workloads.

- Automated infrastructure provisioning, table design, backups and cluster health monitoring.

- SQL data querying (including big data).

- Data encryption in transit and at rest.

- Fine-grained access control.

- HIPAA-eligible.

- Separate scaling of compute and storage.

Pricing

-

On-demand pricing: $0.25/hour (dc2.large) - $13.04/hour (ra3.16xlarge).

-

Reserved instance pricing can save up to 57% over the on-demand option (in a 3-year term).

-

Data storage (RA3 node types): $0.024/GB/month.

Azure Synapse Analytics

Best for: advanced data management

Description

- SQL querying of structured, semi-structured, unstructured data types.

- Multilanguage support (T-SQL, Python, Scala, Spark SQL, .NET).

- Native integrations with Apache Spark, Power BI, Azure ML, Azure Stream Analytics, Azure Cognitive Services, Azure Cosmos DB, etc.

- Integration with third-party BI tools, including Tableau, SAS, Qlik, etc.

- Result-set caching, workload isolation and clustered columnstore indexes.

- Automatic restore points and backups.

- End-to-end data encryption, dynamic data masking, granular access control.

- HIPAA-eligible.

- Separate scaling of compute and storage resources.

Pricing

- Compute on-demand pricing: $1.20/hour (DW100c) - $360/hour (DW30000c).

- Compute reserved instance pricing can save up to 65% over the on-demand option (in a 3-year term).

- Data storage: $23/TB/month ($0.04/TB/hour).

Oracle Autonomous Data Warehouse

Best for: hybrid DWH

Description

- SQL querying of structured, semi-structured, unstructured data.

- Native integration with Oracle Cloud Infrastructure Object Storage and Oracle Analytics Desktop.

- Integration with Azure Blob Storage, Amazon S3.

- Connection with custom applications and third-party products via SQL*Net, JDBC, ODBC.

- Deployment flexibility (Oracle public cloud (shared/dedicated infrastructure) or a customer’s data center).

- Automated scaling, patching and upgrades, backups and recovery.

- High query processing with continuous query optimization, table indexing, data summaries, and auto-tuning.

- Data encryption at rest and in transit.

- Multifactor authentication.

- HIPAA-eligible.

- Separate scaling of storage and compute resources.

Pricing

- Compute: $0.336/ECPU/hour.

- Data storage: $0.0244/GB/month (in the public cloud).

Data Warehouse Development Cost Estimation

The cost of data warehouse building may vary from $70,000 to $1,000,000+, depending on software complexity. The major factors include:

- The number of data sources (ERP, CRM, SCM, etc.)

- Data disparity across different sources (for example, difference in data structure, format, and use of values).

- Data source complexity (for example, big data, streaming data).

- Data volume to be processed and stored.

- Data sensitivity and data security requirements.

- The number of data flows and the number of entities (“clients”, “salary”, “transactions”, etc.) to be integrated into the data warehouse.

- Data warehouse performance requirements (velocity, scalability, etc.).

The final cost of a data warehouse development project may vary as follows:

$70,000 – $200,000*

A basic solution with up to 5 data sources, rule-based analytics of structured data, and scheduled reporting via market-available BI tools.

$200,000 – $400,000*

A solution of medium complexity that integrates up to 15 data sources. It enables both batch and real-time data processing, rule-based and ML-powered analytics.

$400,000 – $1,000,000*

An advanced solution that consolidates data from all the required data sources. It supports batch and real-time processing of all data types, including big data, and features ML/AI modules for predictive and prescriptive analytics.

*Monthly software license fee and other regular fees are NOT included.

Benefits of Building a Data Warehouse

- Automated data management procedures (data collection, transformation, cleansing, structuring) for increased data quality and reliability.

- Unified approach to data security.

- A platform ready for advanced analytics initiatives.

- A data-driven culture across the company.

About ScienceSoft

ScienceSoft is a global IT consulting and software development company headquartered in McKinney, TX, US. Since 2005, we’ve been offering data warehousing services to help businesses develop full-scale data warehouse solutions and advise on how to ensure the success of the data warehouse development process. Being ISO 9001 and ISO 27001-certified, we rely on a mature quality management system and guarantee cooperation with us does not pose any risks to our clients’ data security.