Data Analytics System Enabling Cross Analysis of 30,000 Attributes and 100x Faster Reporting

Customer

The Customer is a leading market research company.

Challenge

Though having a robust analytical system, the Customer believed that it would not be able to satisfy the company’s future needs. Acknowledging this situation, the Customer was keeping their eyes open for a future-focused innovative solution. A system-to-be was to cope with the continuously growing amount of data, to analyze big data faster and enable comprehensive advertising channel analysis.

After deciding on the system’s-to-be architecture, the Customer was searching for a highly qualified and experienced team to implement the project. Satisfied with a long-lasting cooperation with ScienceSoft, the Customer addressed our consultants to do the entire migration from the old analytical system to a new one.

Solution

During the project, the Customer’s business intelligence architects were cooperating closely with ScienceSoft’s big data team. The former designed an idea, and the latter was responsible for its implementation.

For the new analytical system, the Customer’s architects selected the following frameworks:

- Apache Hadoop – for data storage;

- Apache Hive – for data aggregation, query and analysis;

- Apache Spark – for data processing.

Amazon Web Services and Microsoft Azure were selected as cloud computing platforms.

Upon the Customer’s request, during the migration, the old system and the new one were operating in parallel.

Overall, the solution included five main modules:

- Data preparation

- Staging

- Data warehouse 1

- Data warehouse 2

- Desktop application

Data preparation

The system has been supplied with raw data taken from multiple sources, such as TV views, mobile devices browsing history, website visits data and surveys. To enable the system to process more than 1,000 different types of raw data (archives, XLS, TXT, etc.), data preparation included the following stages coded in Python:

- Data transformation

- Data parsing

- Data merging

- Data loading into the system.

Staging

Apache Hive formed the core of that module. At that stage, data structure was similar to raw data structure and had no established connections between respondents from different sources, for example, TV and internet.

Data warehouse 1

Similar to the previous block, that one also based on Apache Hive. There, data mapping took place. For example, the system processed the respondents’ data for radio, TV, internet and newspaper sources and linked users’ ID from different data sources according to the mapping rules. ETL for that block was written in Python.

Data warehouse 2

With Apache Hive and Spark as a core, the block guaranteed data processing on the fly according to the business logic: it calculated sums, averages, probabilities, etc. Spark’s DataFrames were used to process SQL queries from the desktop app. ETL was coded in Scala. Besides, Spark allowed filtering query results according to access rights granted to the system’s users.

Desktop application

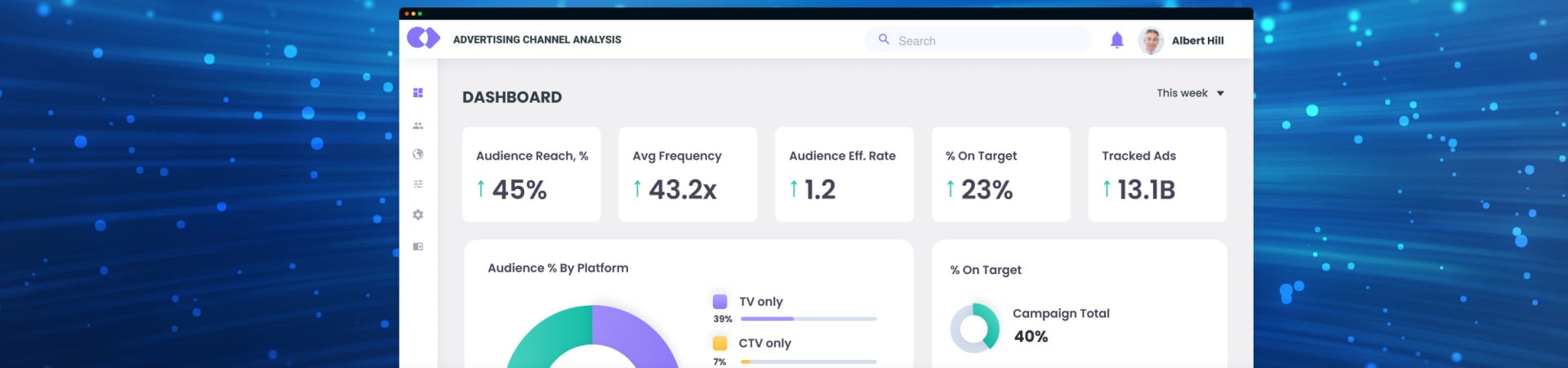

Utilizing the power of WPF and C#, our team developed a comprehensive desktop application that facilitated an in-depth cross-analysis of nearly 30,000 attributes, creating detailed intersection matrices for diverse market analytics. By adopting the MVVM architectural pattern, we ensured a clean separation of concerns that simplified future app enhancements and maintenance. Users benefited from a variety of standard and ad hoc reports, such as Reach Ranking and Share of Time, with the flexibility to define custom parameters for personalized insights. The application's responsive design, crafted with XAML, allowed for the presentation of complex data through simple, interactive charts. Moreover, the system offered forecasting features; for instance, it could predict revenue based on expected reach and advertising budgets.

Results

At the project closing stage, the new system was able to process several queries up to 100 times faster than the outdated solution. With the valuable insights that the analysis of almost 30,000 attributes brought, the Customer was able to carry out comprehensive advertising channel analysis for different markets.

Technologies and Tools

Apache Hadoop, Apache Hive, Apache Spark, Python (ETL), Scala (Spark, ETL), SQL (ETL), Amazon Web Services (Cloud storage), Microsoft Azure (Cloud storage), .NET, WPF, C#.