AI for Mental Health

Software Architecture, Challenges, Costs

With ISO 13485, ISO 9001, and ISO 27001 certifications and 150+ healthcare projects, ScienceSoft designs secure and accurate AI-powered software for mental health care providers and startups.

AI for Mental Health in a Nutshell

AI-driven mental health solutions can increase access to mental health services by up to 30% [Mind Matters Surrey NHS] and detect mental conditions with 63 to 92% accuracy [a systematic review written by researchers from IBM and the University of California]. When used for emotional support, AI-powered mental health chatbots have been shown to reduce psychological distress by 70% [a meta-analysis by researchers from the National University of Singapore].

Custom AI software gives mental health organizations and startups a solution with tailored algorithms that address specific practice needs and specialized treatment approaches.

Mental Health AI Market Overview

The global AI market for mental health was estimated at $1.45 billion in 2024 and is projected to grow at a CAGR of 24.15%, reaching $11.84 billion by 2034. The major market drivers include the increasing prevalence of mental disorders and heightened awareness of mental health as a significant health concern.

Use Cases of AI in Mental Health

Early detection tools

AI-powered tools can analyze speech (e.g., changes in tone, pitch), text (e.g., words that indicate certain emotions, the sentiment of a text), and physiological data (e.g., heart rate variability, galvanic skin response) to detect mental health and neurological conditions. Research suggests such approaches can detect emerging issues earlier than standard clinical methods, helping clinicians prioritize cases and intervene sooner.

Emotional support chatbots and virtual companions

AI-powered conversational tools provide non-clinical emotional support through structured dialogue and evidence-based techniques such as CBT or mindfulness exercises. Language models enable these systems to interpret user input and generate context-aware responses, while predefined frameworks and thresholds ensure safe and consistent interactions. For example, Woebot follows rule-based therapeutic principles, while Earkick uses language models to deliver more adaptive, personalized support.

Intake, triage, and care navigation

AI can support intake and care navigation by collecting patient information and structuring it for clinical review. Language models help interpret free-text chat responses, extract relevant details from referral notes, and generate easy-to-scan intake summaries. This allows teams to prioritize cases, match patients to appropriate services, and reduce the time spent reviewing initial assessments.

Therapy documentation and recordkeeping assistants

Therapists can use LLM-based agents to transcribe therapy sessions into structured progress notes, summarize records, and identify key facts or inaccuracies in the chart. Such assistants can offer capabilities that help therapists retrieve patient data from a mental health EHR system more efficiently, such as voice commands, smart entry search suggestions, and text-to-speech. This helps reduce documentation burden and ensures records remain accurate, complete, and aligned with ongoing care.

Billing support

AI can support back-office workflows such as prior authorization, coding, and billing in mental health settings. Language models can extract relevant details from session notes, map them to billing codes, and prepare supporting documentation for claims or authorization requests. This helps reduce manual effort, improve coding accuracy, and ensure that records meet payer-specific requirements, including time-based and session-based billing rules.

Mental health monitoring

AI can support ongoing mental health care by analyzing patient-reported outcomes, session data, and behavioral signals from apps or wearables over time. Machine learning models identify trends, deviations, or early signs of relapse, while language models help summarize changes and highlight clinically relevant patterns across multiple data sources. This enables more continuous, data-driven assessment between visits and supports timely intervention when a patient’s condition shifts.
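As a rough illustration of how deviations in patient-reported outcomes might be flagged, the sketch below compares each new score with the patient's own recent baseline. The function name, window size, and thresholds are illustrative assumptions, not clinically validated values, and a production system would likely use a trained model rather than a simple z-score.

```python
from statistics import mean, stdev

def flag_deviations(scores, baseline_size=4, z_threshold=2.0, min_sigma=1.0):
    """Return indices of scores that deviate from the preceding baseline window.

    All parameters are hypothetical defaults chosen for illustration only.
    """
    flagged = []
    for i in range(baseline_size, len(scores)):
        baseline = scores[i - baseline_size:i]
        mu = mean(baseline)
        # Floor the spread so that a perfectly flat baseline
        # does not turn every small change into an alert.
        sigma = max(stdev(baseline), min_sigma)
        if abs(scores[i] - mu) / sigma >= z_threshold:
            flagged.append(i)
    return flagged

# Weekly self-reported scores (made-up numbers)
weekly_scores = [6, 7, 6, 7, 6, 7, 14, 15]
print(flag_deviations(weekly_scores))
```

Flagged points would be surfaced to a clinician for review rather than acted on automatically.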

Tailored non-clinical content

In non-clinical settings (e.g., mindfulness and stress management apps), AI can be used to adapt content based on user behavior and preferences. Machine learning models analyze engagement patterns to surface more fitting mindfulness exercises, journaling prompts, or stress management techniques. This helps increase user engagement and supports consistent self-care in everyday life.

Therapy quality assessment

AI can help monitor the quality of therapy sessions by analyzing conversations between therapists and patients. One real-life example is Lyssn’s algorithm that evaluates text messages and transcribed audio/video calls for the use of evidence-based practices such as motivational interviewing and CBT versus unrelated dialogue. Such tools can support supervision, quality assurance, and clinician training.

How It Works

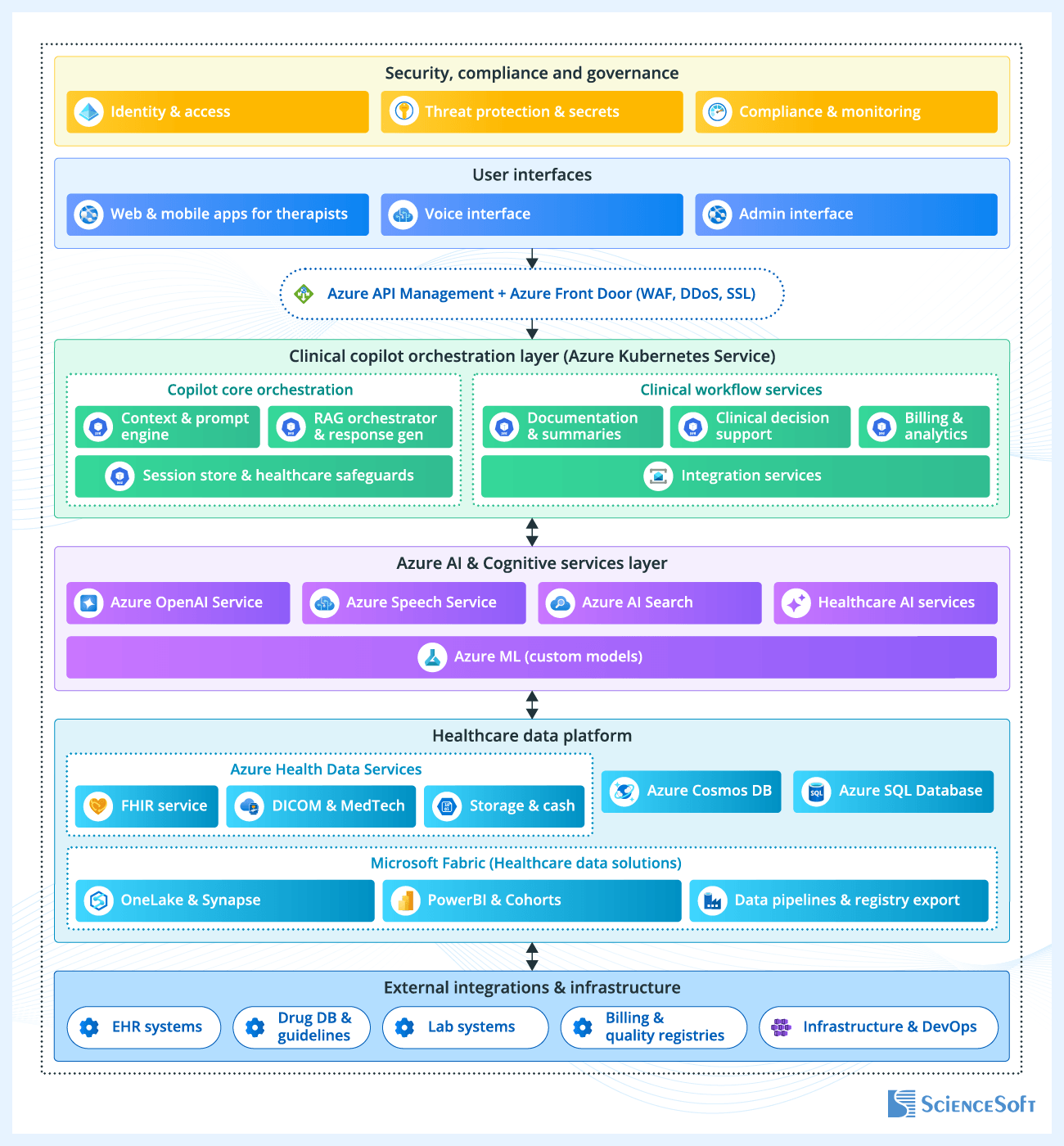

ScienceSoft’s engineers have prepared a sample architecture that shows how AI can be integrated into mental health EHR systems to support everyday clinical and administrative work. The architecture focuses on provider-facing workflows such as session documentation, reviewing patient histories, clinical decision support, and billing. The example is built on Microsoft services, but the same principles can be applied using other platforms and tools, or adapted for organizations with different levels of digital maturity.

Rather than relying on a single AI component, the system distributes functionality across several services aligned with specific tasks. Documentation support, decision support logic, and billing-related processes operate as separate layers. This makes it easier to apply different controls depending on the workflow (e.g., enforcing stricter validation for clinical inputs while allowing more flexibility in drafting notes) and to update individual AI capabilities without reworking the rest of the system.

When generating documentation or summaries, the AI does not rely on a general understanding of mental health concepts. Instead, it builds each response from the specific information relevant to the task. To do this, the system first identifies which patient and session the request refers to and limits the scope of the operation to that context. It then gathers supporting data from prior session notes, structured record fields, and relevant guidelines. This information is combined into a focused input for the model, and the output remains tied to these sources so clinicians can review how it was formed.
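The scoping step described above can be sketched as follows. This is a minimal example under stated assumptions: the `Source` structure, the `build_scoped_input` helper, and the source kinds are hypothetical names introduced for illustration and are not part of any specific EHR or Microsoft API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Source:
    source_id: str
    patient_id: str   # empty string for shared material such as guidelines
    kind: str         # e.g., "session_note", "record_field", "guideline"
    text: str

def build_scoped_input(task, patient_id, sources, max_sources=5):
    """Build a model input from one patient's data plus shared guidelines.

    Returns the prompt text and the ids of the sources it was built from,
    so the output can later be traced back to them.
    """
    in_scope = [
        s for s in sources
        if s.patient_id == patient_id or s.kind == "guideline"
    ][:max_sources]
    lines = [
        f"Task: {task}",
        "Use only the numbered sources below and cite them by id.",
    ]
    for s in in_scope:
        lines.append(f"[{s.source_id}] ({s.kind}) {s.text}")
    return "\n".join(lines), [s.source_id for s in in_scope]

sources = [
    Source("n1", "p-001", "session_note", "Reported improved sleep."),
    Source("n2", "p-002", "session_note", "Reported panic episodes."),
    Source("g1", "", "guideline", "Document risk assessment in each note."),
]
prompt, used_ids = build_scoped_input("Draft a progress note", "p-001", sources)
print(prompt)
```

Returning the source ids next to the prompt is what lets the interface show clinicians which records a draft was built from.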

Before being used in clinical workflows, AI-generated summaries or suggestions always appear as draft content that requires therapist review. For example, session notes, extracted insights, or suggested documentation updates are shown alongside supporting context so therapists can confirm that the interpretation accurately reflects the patient’s statements. In workflows involving structured assessments or decision support, predefined validation rules can be applied before results are surfaced, with final decisions remaining fully under clinician control.
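One way to enforce the draft-then-review rule is to make approval an explicit state that the record-writing step checks. The sketch below assumes a hypothetical `DraftNote` type and helper functions; it illustrates the idea and does not describe a particular product's implementation.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class DraftNote:
    text: str
    source_ids: List[str] = field(default_factory=list)  # supporting context shown to the reviewer
    status: str = "draft"
    reviewed_by: Optional[str] = None

def approve(note, therapist_id, edited_text=None):
    """Mark a draft as approved, optionally replacing the text with the therapist's edits."""
    if edited_text is not None:
        note.text = edited_text
    note.status = "approved"
    note.reviewed_by = therapist_id
    return note

def commit_to_record(note, record):
    """Write a note to the chart only after a therapist has approved it."""
    if note.status != "approved":
        raise PermissionError("AI-generated drafts require therapist review before saving.")
    record.append(note.text)

chart = []
draft = DraftNote("Patient reports improved sleep.", source_ids=["n1"])
approve(draft, therapist_id="t-17")
commit_to_record(draft, chart)
```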

AI workflows introduce additional data exposure points, such as prompts, retrieved records, and generated outputs. To manage this, the system enforces role-based access at each step of the interaction and logs inputs, retrieved data, and outputs as part of a single trace. This way, unauthorized users cannot accidentally access protected data through AI-generated responses, and all AI-assisted actions can be audited and reviewed when needed.
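A minimal sketch of this pattern is shown below: records are filtered by role before they reach the model, and the prompt, retrieved record ids, and output are written to one trace entry. The role names, the permission map, and the `generate` callable are assumptions made for illustration; a real deployment would rely on the platform's identity and logging services.

```python
import json
import uuid
from datetime import datetime, timezone

# Hypothetical role-to-record-type permissions
ROLE_PERMISSIONS = {
    "therapist": {"session_note", "assessment", "billing_record"},
    "billing_specialist": {"billing_record"},
}

def filter_by_role(role, records):
    """Keep only the records the given role is allowed to see."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    return [r for r in records if r["type"] in allowed]

def run_traced_request(user_id, role, prompt, records, generate):
    """Run one AI request and return the output with a single JSON trace entry."""
    visible = filter_by_role(role, records)
    output = generate(prompt, visible)  # the model sees only permitted records
    trace = {
        "trace_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "role": role,
        "prompt": prompt,
        "retrieved_record_ids": [r["id"] for r in visible],
        "output": output,
    }
    return output, json.dumps(trace)

def fake_generate(prompt, records):
    # Stand-in for a model call, used only to make the example runnable
    return f"{len(records)} record(s) used"

records = [
    {"id": "n1", "type": "session_note", "text": "..."},
    {"id": "b1", "type": "billing_record", "text": "..."},
]
output, trace_json = run_traced_request(
    "u-42", "billing_specialist", "Summarize open claims", records, fake_generate
)
print(output)
```

Because filtering happens before generation, a user without access to session notes cannot receive their content indirectly through a generated response.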

Real-Life Examples of AI in Mental Health

In April 2024, WHO launched S.A.R.A.H., a digital health promoter prototype. Powered by generative AI, S.A.R.A.H. features an enhanced empathetic response capability. Utilizing new language models, it provides 24/7 engagement on various health topics, including mental health. S.A.R.A.H. is accessible in 8 languages and available on any device.

Canary Speech, a digital healthcare startup, developed a platform that uses AI to measure stress, mood, and energy via voice analysis. By capturing and processing subtle changes in tone, pitch, rhythm, and other vocal features, it identifies indicators of cognitive decline, neurological conditions, mental health disorders, etc.

Blueprint is an AI-powered therapist assistant that can be integrated into an EHR system. It transcribes in-person and telemedicine therapy sessions to generate customizable notes and treatment plan drafts.

Technologies ScienceSoft Uses to Build AI for Mental Health

ScienceSoft's software engineers and data scientists prioritize the reliability and safety of medical chatbots and use the following technologies.

Machine learning platforms and services

AWS

Azure

Machine learning frameworks and libraries

Frameworks

Libraries

Bot platforms

Mental Health AI Challenges and How to Tackle Them

Designing AI workflows in mental health settings

Providers should not rely on open-ended AI for all tasks. For steps that require consistency and clear rules, it is more reliable to use predefined logic. For example, intake questionnaires, symptom checklists, or triage flows can follow fixed question sequences, required fields, and pre-established thresholds. This ensures that critical parts of the workflow behave predictably and do not depend on how the model interprets input.
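For instance, a fixed triage step might look like the sketch below. The questionnaire items, the 0–3 scoring range, and the cut-off values are placeholders invented for this example; real flows would use a validated instrument and thresholds set by clinicians.

```python
# Hypothetical questionnaire: each item is scored 0-3
REQUIRED_FIELDS = ("mood", "sleep", "anxiety")

# Placeholder cut-offs, checked from highest to lowest
TRIAGE_THRESHOLDS = [(7, "priority review"), (4, "standard review")]

def triage(answers):
    """Apply fixed rules to a completed questionnaire; no model is involved."""
    missing = [f for f in REQUIRED_FIELDS if f not in answers]
    if missing:
        raise ValueError(f"Missing required fields: {', '.join(missing)}")
    for name in REQUIRED_FIELDS:
        if answers[name] not in range(0, 4):
            raise ValueError(f"Field '{name}' must be an integer from 0 to 3")
    total = sum(answers[name] for name in REQUIRED_FIELDS)
    for cutoff, label in TRIAGE_THRESHOLDS:
        if total >= cutoff:
            return label
    return "routine follow-up"

print(triage({"mood": 3, "sleep": 3, "anxiety": 2}))
```

The same answers always produce the same result, which is the property that makes this kind of step easier to validate than a free-form model response.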

When AI is used to interpret free-text input, ScienceSoft recommends using confidence thresholds to guide the interaction. If confidence in the interpretation is low, the system can ask follow-up questions to elicit additional information (e.g., how long a symptom has been present or how often it occurs). If uncertainty remains, the system can switch to pre-built response options or ask the user to rate the intensity of a certain symptom on a scale.
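The escalation logic described above can be expressed as a small routing function. The threshold of 0.8 and the limit of two follow-up questions are assumed values for illustration, and the step names are hypothetical.

```python
def next_step(confidence, follow_ups_asked, high=0.8, max_follow_ups=2):
    """Choose the next interaction step from the interpretation confidence.

    confidence: score between 0 and 1 for the current interpretation
    follow_ups_asked: number of clarifying questions already asked
    """
    if confidence >= high:
        return "accept_interpretation"
    if follow_ups_asked < max_follow_ups:
        return "ask_follow_up"
    # Uncertainty remains after follow-ups: fall back to structured input
    return "show_rating_scale"

print(next_step(confidence=0.55, follow_ups_asked=0))
print(next_step(confidence=0.60, follow_ups_asked=2))
```

Capping the number of follow-up questions keeps the conversation from looping when the input stays ambiguous.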

Before full deployment, it is a good practice to run pilots and compare AI outputs with clinician assessments to identify common gaps or failure cases. During ongoing use, providers should monitor how the system handles ambiguous or inconsistent input and flag recurring issues. If problems persist, these findings can be shared with the vendor for further improvement.

Ensuring accuracy in AI-powered mental health applications

AI models in mental health applications need to handle subjective, often unclear user input. If the system misinterprets tone, intent, or symptom severity, it can produce misleading outputs and reduce trust in the product.

A practical approach for product teams is to train models on real mental health interactions. This includes therapy transcripts, patient-reported inputs, and conversational data with slang, incomplete thoughts, and contradictions across multiple turns. We recommend using supervised fine-tuning on this type of data and incorporating clinician-reviewed examples to improve how the model interprets these signals.

Evaluation should also reflect how the product is used in practice. For example, you can test how the model handles inconsistent answers across a conversation or different ways people describe the same symptoms. A good way to do this is to build evaluation sets from annotated conversations and compare model outputs with clinician judgments to identify common failure cases.
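A simple version of such a comparison is sketched below. It assumes each annotated conversation has one clinician label and one model label; the field names and severity labels are made up for the example.

```python
from collections import Counter

def evaluate(examples):
    """Compare model labels with clinician labels.

    Returns the agreement rate and the disagreement pairs
    (clinician label, model label), most frequent first.
    """
    if not examples:
        raise ValueError("Evaluation set is empty")
    disagreements = Counter(
        (e["clinician"], e["model"])
        for e in examples
        if e["clinician"] != e["model"]
    )
    agreement = 1 - sum(disagreements.values()) / len(examples)
    return agreement, disagreements.most_common()

# Made-up annotated examples
eval_set = [
    {"clinician": "severe", "model": "moderate"},
    {"clinician": "severe", "model": "moderate"},
    {"clinician": "mild", "model": "mild"},
    {"clinician": "moderate", "model": "moderate"},
]
agreement, failure_cases = evaluate(eval_set)
print(agreement, failure_cases)
```

Sorting disagreements by frequency points reviewers to the most common failure pattern first, such as the model underestimating severity in this made-up set.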

To improve the system over time, include built-in feedback mechanisms that allow clinicians to flag incorrect or unclear outputs directly in the interface. This feedback can then support further training and evaluation without relying on manual or non-compliant data sharing.

Users may mistake AI chatbots for real therapy, which could lead to financial and reputational risks for the chatbot’s founders

Mental health chatbots must clearly communicate their limitations, such as their inability to replace human therapists. Misleading claims about "forming therapeutic bonds" or relying on "proven methods" like CBT may give the user a false impression that an AI chatbot can provide actual psychotherapy. Regular reminders should highlight the need for in-person therapy and clarify the restricted care AI tools provide. Chatbots should offer an opt-out option, connecting users to a human therapist when needed.

Why Choose ScienceSoft as Your AI Development Partner

- In AI development services since 1989 and in healthcare IT since 2005.

- AI consultants and developers with 7–20 years of relevant experience and competencies in major ML technologies, frameworks, and libraries.

- Hands-on experience with HIPAA, HITECH, FDA, MDR, and GDPR regulatory requirements.

- ISO 13485, ISO 9001, and ISO 27001 certifications to ensure high-quality AI solutions and full security of the clients’ data.

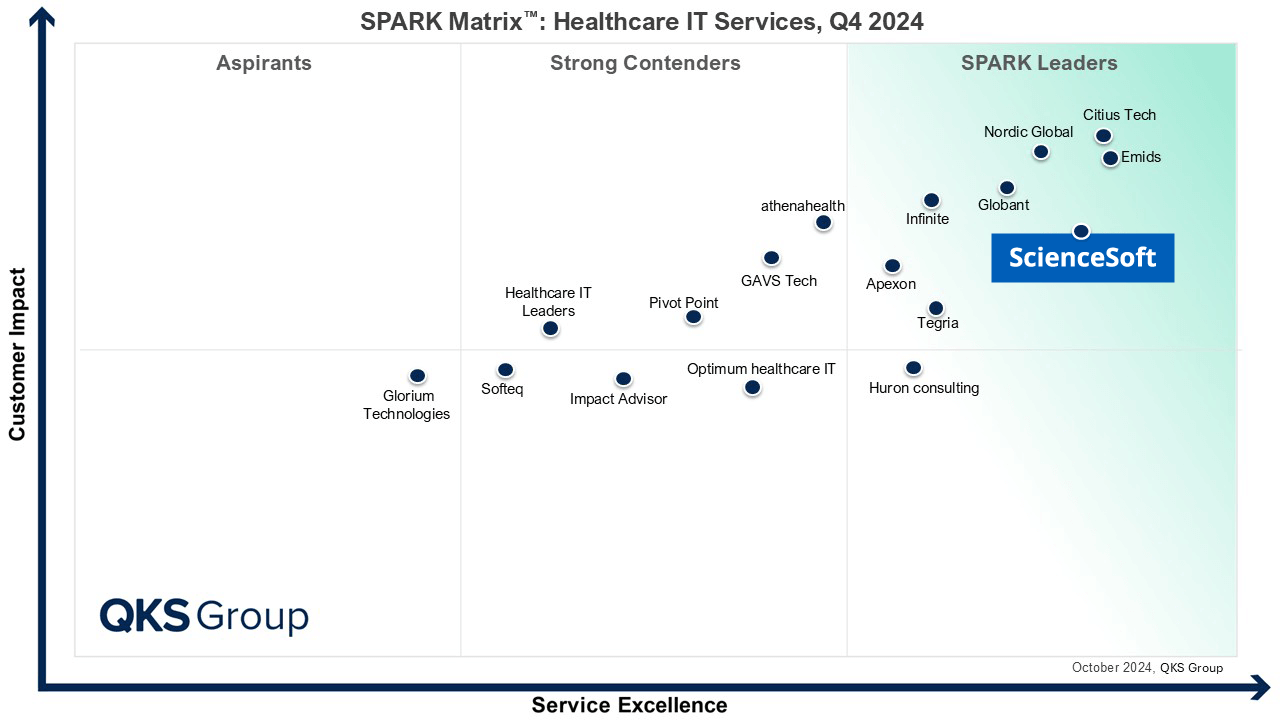

Featured among Healthcare IT Services Leaders in the 2022 and 2024 SPARK Matrix

Microsoft Solutions Partner for Data & AI

Named among America’s Fastest-Growing Companies by Financial Times, 5 years in a row

Recognized for Healthcare Technology Leadership by Frost & Sullivan in 2023 and 2025

Top Healthcare IT Developer and Advisor by Black Book™ survey 2023

Four-time finalist across HTN Awards programs

HIMSS Gold member advancing digital healthcare

ISO 13485-certified quality management system

ISO 27001-certified security management system

How Much Does AI-Driven Software for Mental Health Cost?

The development costs of an AI-powered solution for mental health range from $70,000 to $2,000,000 depending largely on the software type and functional scope. Other cost drivers include:

- Algorithm complexity and expected accuracy.

- Required extent of data cleaning and preprocessing.

- Number of data sources and volume of data.

- Integrations with other systems and medical devices.

- Non-functional requirements (usability, performance, security, etc.).

- Compliance requirements (HIPAA, GDPR, FDA, etc.).

$70,000–$250,000

For an AI chatbot that provides informational support and emotional aid in non-clinical settings.

$200,000–$300,000

For an AI-powered meditation app with tailored meditation plans, goal setting, progress tracking, etc.

$300,000–$600,000+

For a medical chatbot offering complex diagnostics or clinician support.

$300,000–$800,000+

For an EHR-integrated digital therapeutics (DTx) solution with AI-powered treatment planning and real-time patient monitoring features.

$400,000–$800,000+

For a custom AI-powered EHR system with features like dictation, virtual assistance, and smart billing.

$600,000–$2,000,000

For an advanced EHR system with AI-powered clinical decision support, smart treatment plan generation, and predictive analytics capabilities.