Q1 2026 Healthcare AI Trends: Agents Can't Keep Up With GenAI, Patients Turn to Chatbots Before Clinicians

AI adoption in healthcare is becoming more selective. In Q1 2026, ambient documentation tools were scaling across health systems, while diagnostic AI remained concentrated in imaging, and agentic AI adoption was still limited by governance and deployment challenges. Drawing on ScienceSoft’s Q1 2026 Healthcare AI Market Watch and recent project experience, we highlight where AI is already delivering measurable impact, where adoption is stalling, and what this means for healthcare organizations planning their next steps.

Key takeaways:

- Ambient AI is reaching system-level adoption, but scaling exposes new challenges in standardization across specialties and workflows.

- Diagnostic AI remains concentrated in imaging, where tools are easier to validate and integrate, while adoption in other clinical areas is still limited.

- Agentic AI adoption remains limited, as action-based use cases require deeper system integration, higher access rights, and stricter governance, making them harder to deploy at scale.

- Clinicians are actively using AI, even outside enterprise control, creating a growing governance gap.

- Patients are increasingly turning to AI as a first point of contact, especially outside clinical hours, reshaping expectations of access and communication.

Ambient AI Keeps Maturing: Large Deployments Start Delivering System-Level Results

In early 2026, large-scale ambient AI deployments are beginning to show measurable results across entire health systems, not just in pilot settings. As noted in ScienceSoft’s Q4 2025 trend watch, ambient AI was the first clinical AI category to reach broad deployment in 2025. Now, these implementations are moving into a more mature phase of use. What started as a way to reduce documentation time is becoming part of how clinical work is structured and recorded at scale.

Recent large-scale deployments illustrate this transition. Houston Methodist rolled out an ambient AI platform across ambulatory, emergency, and inpatient care, using it to generate structured clinical documentation at scale. The system reportedly reduced clinicians’ documentation time by 40%, increased their time spent with patients by 27%, and cut after-hours work by 33%. Clinicians were also able to close encounters faster and see more patients per day.

Another marker of the use case’s maturity is EHR-native ambient documentation moving into day-to-day use. Group Health Cooperative of South Central Wisconsin became the first organization to implement Epic’s AI Charting tool in clinical practice. The system generates visit notes in real time with patient consent, while clinicians remain responsible for reviewing and finalizing documentation. Epic’s early reports cite savings of up to 60 minutes per doctor per day, along with improvements in both clinician experience and patient interactions.

At the same time, ambient AI is beginning to split by specialty. In specialty workflows, general-purpose scribing often falls short, which creates demand for specialized tools that understand specialty jargon and niche documentation conventions. For example, Nextech’s Cora Scribe was designed specifically for ophthalmology, with built-in exam structures, terminology, and alignment with how records are maintained in that field. This reduces the need for post-editing of AI-generated documentation and helps avoid inconsistencies in patient records.

Scaling ambient AI also exposes a new limitation: documentation quality becomes harder to standardize across different clinical contexts. As deployments expand across settings and departments, variations in workflows, terminology, and documentation standards start to affect output. A note that works well in one setting may require significant correction in another.

This creates a trade-off between specialization and standardization. Specialty-specific tools can produce higher-quality output in more uniform environments, such as single-specialty practices, where workflows and documentation requirements are consistent. In larger, multi-specialty organizations, however, the challenge shifts. Large providers are more likely to adopt general-purpose systems that can be adapted across departments and integrated into enterprise EHR platforms. These systems offer broader coverage, but require more configuration and manual post-editing of the generated content to handle variation across specialties.

“In our work, we usually see a hybrid approach work best: one core ambient AI platform, plus a few specialized tools where they really make a difference. In large health systems, the core platform is often something that integrates cleanly with enterprise EHR environments such as Epic or Oracle Health and can support common use cases like ambient scribing, visit summarization, and structured note drafting. Depending on the case, that may mean tools such as Microsoft Dragon Copilot, Abridge, or the EHR vendor’s own embedded capabilities.

“At the same time, there are areas where specialization clearly pays off. Radiology and pathology are good examples, and some oncology use cases as well. You typically see it when workflows are so specific that the reduction in editing time from implementing a specialty tool is immediately measurable. But the more specialized tools you add, the faster you’ll run into integration overhead and data fragmentation. So for most providers, it makes sense to start with a configurable core platform and only add specialty tools where the ROI is measurable.”

Vadim Belski, Head of AI, Principal Architect, ScienceSoft

Diagnostic AI Grows Through Imaging, One Use Case at a Time

Diagnostic AI in healthcare remains concentrated in medical imaging. In Q1 2026, this is reflected in both ongoing regulatory clearances and increased policy attention to how clinical AI should be used in practice. Imaging has long accounted for the majority of FDA-cleared AI tools, and new solutions continue to emerge within this space.

One example is Median Technologies receiving FDA 510(k) clearance for its eyonis® LCS, a computer-aided detection and diagnosis solution for lung cancer screening using low-dose CT. This reflects the continued expansion of AI into defined clinical programs such as screening, where tools are expected to operate within established radiology workflows and deliver consistent, repeatable outputs.

This trend is also reflected at the policy level. In December 2025, the US Department of Health and Human Services (HHS) launched a department-wide Request for Information (RFI) on AI to coordinate its approach to adoption across clinical care. A month later, the Assistant Secretary for Technology Policy/Office of the National Coordinator for Health IT (ASTP/ONC), which oversees federal health IT policy in the US, issued a separate RFI focused specifically on diagnostic imaging. RFIs are used to gather input from industry on how technologies should be implemented and regulated. The fact that imaging is addressed as a distinct area suggests that regulators see it as one of the more immediate and actionable domains for clinical AI deployment.

The same pattern appears in the industry perception of diagnostic AI. In a 2026 survey by HealthTech Magazine, radiology was the only diagnostic AI application cited as highly successful, with respondents highlighting its use in identifying time-sensitive conditions such as intracranial hemorrhage, pulmonary embolism, and vessel occlusion. A JAMIA study of health systems, published a year earlier, also found that imaging was the most widely deployed clinical AI use case at the time, with more limited adoption in other areas.

“Imaging has been the natural starting point for diagnostic AI for several reasons. The data is already structured and standardized: formats like DICOM make it much easier to train models at scale. You also tend to have clearer ground truth, for example, whether a tumor is present or not, which is much harder to define in other clinical areas. Most use cases in imaging are quite focused, like detecting a specific condition, so they’re easier to validate and get through regulatory review. The workflows are also already digital and relatively self-contained, which makes integration simpler and less disruptive for the rest of the system. Another important factor is that AI in imaging usually supports clinicians rather than replacing their decisions, so the risk profile is lower. In areas like primary care, you’re dealing with unstructured data, much more context, and a lot more ambiguity, which makes both development and approval much more difficult.”

Hadeel Abu Baker, Senior Healthcare IT Consultant, ScienceSoft

Agentic AI Adoption Is Lagging Behind, and the Market Is Shifting to Make It More Deployable

Agentic AI adoption in healthcare is growing more slowly than adoption of other types of AI. Agentic AI refers to systems that can take independent actions within workflows, not just generate drafts or recommendations. In healthcare, this includes tasks such as updating records, scheduling appointments, or triggering downstream processes based on patient data.

Forrester notes that AI agents have high potential impact in healthcare, particularly in addressing workforce shortages. It points to use cases such as maintaining provider directories to improve access to care and gathering documentation for prior authorizations and appeals to reduce delays and administrative costs.

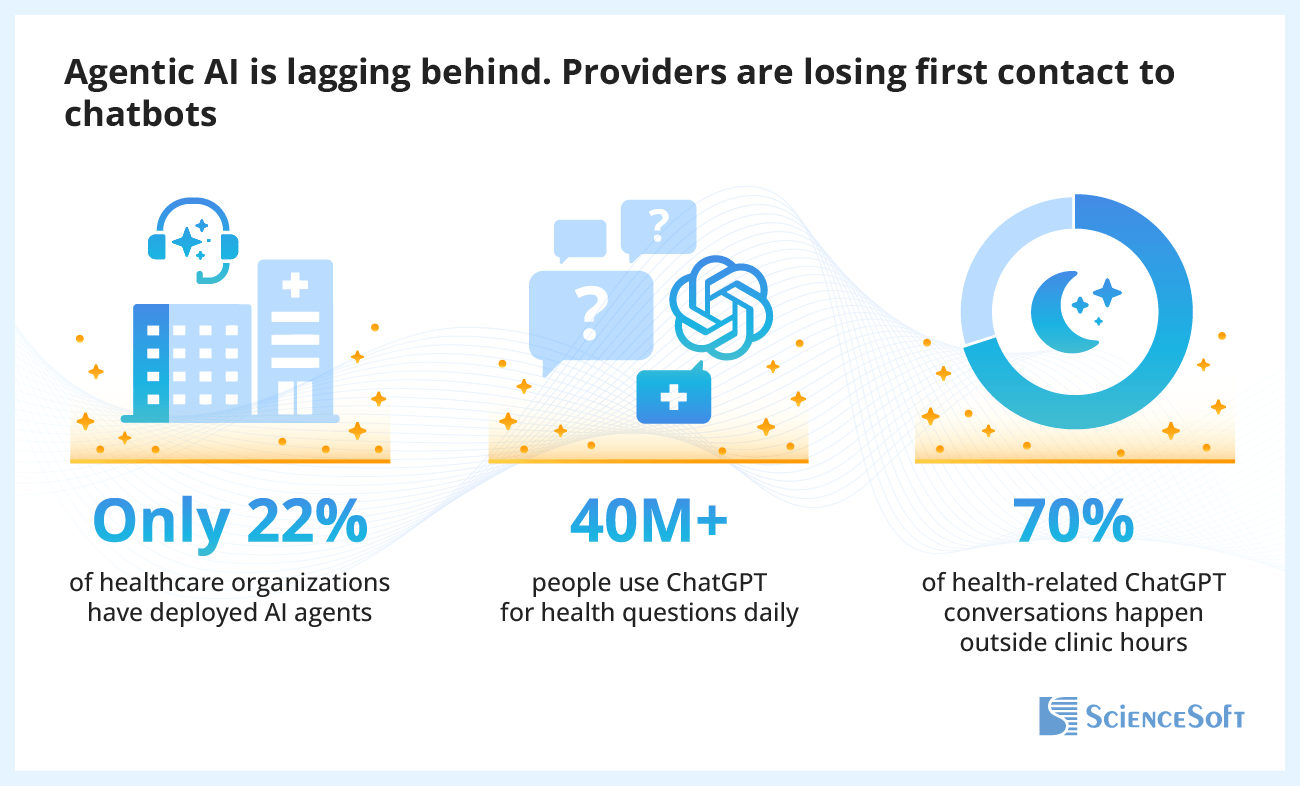

At the same time, agentic solutions remain far less widely adopted than generative AI. According to NVIDIA’s 2026 State of AI in Healthcare and Life Sciences report, 69% of healthcare organizations are using generative AI, while only 22% are using AI agents.

A major reason is the level of autonomy involved. Unlike other AI tools, agents require access to core systems and the ability to act on data, which introduces higher risks and stricter governance requirements. As a result, organizations tend to start with lower-risk, operational workflows, where errors are easier to detect and contain.

“The main problem with agentic AI in healthcare is that you can never let it improvise. Providers are cautious for a reason: if an AI agent goes off-script, you are immediately dealing with privacy, compliance, and operational risks all at once. So, one key challenge is keeping its actions predictable.

“In a recent project for the Amazon Nova Partner Demo Competition, we built a voice agent for appointment scheduling that integrates with EHR systems and handles patient calls end-to-end in real time, from identity verification to booking. To reduce free-form model decisions, we set up separate functions for each step of the interaction (identity verification, provider availability checks, and appointment booking). In practice, that means the agent cannot improvise its way through the call: at each point, it is limited to a specific action path we defined in advance. The agent verifies the patient’s identity at the start of each call, and if verification fails, the interaction is immediately handed off to a human operator. We also limited its scope to scheduling data only, with no access to clinical or billing information. This makes it possible to automate routine interactions while keeping control over what the agent can do. In testing, this setup was able to process up to 70% more calls per hour than a patient service representative.”

Vadim Belski, Head of AI, Principal Architect, ScienceSoft
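
The constrained-path idea described in the quote can be illustrated with a minimal Python sketch. It is not the project's actual implementation: the `Step` states, the `Call` record, the in-memory `PATIENTS` and `OPEN_SLOTS` stores, and all function names are hypothetical, and the hand-off when no slot is available is an added assumption. The sketch only shows how a fixed table of step handlers keeps every transition predefined, with identity verification first and a human hand-off on failure.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class Step(Enum):
    VERIFY_IDENTITY = auto()
    CHECK_AVAILABILITY = auto()
    BOOK_APPOINTMENT = auto()
    DONE = auto()
    HUMAN_HANDOFF = auto()


@dataclass
class Call:
    patient_id: str
    date_of_birth: str
    provider: str
    step: Step = Step.VERIFY_IDENTITY  # every call starts with verification
    slot: Optional[str] = None
    log: list = field(default_factory=list)


# Hypothetical scheduling-only stores: no clinical or billing data is reachable.
PATIENTS = {"p-001": "1980-04-12"}
OPEN_SLOTS = {"dr-lee": ["2026-04-02 09:00", "2026-04-02 10:30"]}


def verify_identity(call: Call) -> Step:
    # Failed verification goes straight to a human operator.
    ok = PATIENTS.get(call.patient_id) == call.date_of_birth
    return Step.CHECK_AVAILABILITY if ok else Step.HUMAN_HANDOFF


def check_availability(call: Call) -> Step:
    slots = OPEN_SLOTS.get(call.provider, [])
    if not slots:
        # Assumption for this sketch: no open slot also triggers a hand-off.
        return Step.HUMAN_HANDOFF
    call.slot = slots[0]
    return Step.BOOK_APPOINTMENT


def book_appointment(call: Call) -> Step:
    OPEN_SLOTS[call.provider].remove(call.slot)
    return Step.DONE


# The only actions the agent can take; anything not listed here cannot run.
HANDLERS = {
    Step.VERIFY_IDENTITY: verify_identity,
    Step.CHECK_AVAILABILITY: check_availability,
    Step.BOOK_APPOINTMENT: book_appointment,
}


def run_call(call: Call) -> Call:
    # Follow the predefined path until a terminal step (DONE or HUMAN_HANDOFF).
    while call.step in HANDLERS:
        call.log.append(call.step.name)
        call.step = HANDLERS[call.step](call)
    call.log.append(call.step.name)
    return call
```

Calling `run_call(Call("p-001", "1980-04-12", "dr-lee"))` walks the three steps in order, while a mismatched date of birth ends the call at `HUMAN_HANDOFF` before any scheduling data is touched. In a real deployment, a language model would sit in front of these handlers to interpret speech, but it would still only be able to trigger the actions listed in the table.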

Currently, agentic solutions are often built as one-off implementations because they depend heavily on each provider's IT environment and operational policies. However, vendors are increasingly focusing on making agent development more repeatable. For example, Greenway Health introduced Agentic AI Factory, a platform designed to standardize how agents are built, validated, and deployed within healthcare environments. Instead of developing each agent as a separate project, this solution treats them as part of a continuous pipeline, with built-in controls for compliance, security, and explainability. According to the company, this allows new agents to be deployed in weeks rather than months, particularly for operational workflows such as patient registration and billing.

Doctors Are Already Using AI, Hospitals Are Struggling to Catch Up

As of Q1 2026, a significant share of clinical AI use appears to be happening outside formal enterprise control. In a survey of 54 hospitalists at a large academic tertiary care hospital, 66.7% reported using AI in clinical practice for tasks closely tied to clinical reasoning, including generating differential diagnoses, selecting tests or treatment options, confirming suspected diagnoses, and creating patient education materials. At the same time, none of the respondents reported using enterprise-provided tools; instead, they relied on low-cost or free public solutions.

Looking at how these tools are selected adds another layer to this pattern. In the survey, use of healthcare-specific platforms was reported far more frequently than general-purpose tools (51.9% for OpenEvidence vs. 7.4% for ChatGPT), indicating a preference for sources better aligned with clinical practice. However, these tools are still accessed outside any managed environment.

As a result, a governance gap is emerging. AI is already becoming part of clinical workflows, but without standardized controls for accuracy, explainability, or accountability. For healthcare organizations, the question is no longer whether to adopt AI for clinicians but how to control AI use that is already happening in clinical practice, regardless of policy. The priority now is to replace informal usage with approved tools that can support the same workflows under clear governance.

“I’d note that this survey reflects a single organization and a relatively small sample, so it doesn’t necessarily represent the entire industry, nor does it prove clinician preference for non-enterprise AI tools. What it does show very clearly is that clinicians already want AI tools in their daily workflow, and if approved options are unavailable, many will use whatever is easiest to access. Because of that, trying to ban public AI altogether is usually less effective than giving people a basic controlled alternative.

“Realistically, that can be as simple as approving one clinical evidence tool for questions that would otherwise go to OpenEvidence (for example, UpToDate Expert AI or Dyna AI) and one secure general-purpose assistant for drafting, summarization, and nonclinical workflow support. If documentation is a major use case, that second tool could also be something like Dragon Copilot, Abridge, or Suki. You do not need a full platform strategy on day one. A small approved stack, plus a short policy such as ‘no patient identifiers in public tools; only use de-identified data,’ already gets you to a much safer place than uncontrolled use.”

Hadeel Abu Baker, Senior Healthcare IT Consultant, ScienceSoft

Patients Turn to AI for Everyday Health Questions

General-purpose AI is increasingly used as an early source of health information. According to a 2026 report by OpenAI, more than 40 million people worldwide ask ChatGPT health-related questions every day. Around 70% of these interactions take place outside normal clinic hours, and usage is particularly high in rural areas, where users send over 580,000 health-related messages each week.

This pattern suggests that AI is becoming a readily available entry point for health-related questions, especially when access to care is limited. As a result, patient expectations are shifting toward faster responses, clearer explanations, and on-demand access to information.

“What we’re seeing is that many patients now come in with some level of understanding already shaped by AI. They may have looked up symptoms or possible diagnoses before ever speaking to a clinician, and that influences both their expectations and the conversation. To respond to this, providers need to engage earlier in that process. One way is to offer their own AI assistants grounded in the organization’s clinical guidelines and approved educational materials, so patients get reliable information from the start rather than turning to generic tools.

“At the same time, it’s important to adapt how clinicians interact with patients. Instead of dismissing AI-informed questions, they need to acknowledge them and work with that input. Even simple steps, like asking whether a patient has already used AI tools, can help provide context. There’s also a content challenge. If trustworthy patient education sources are hard to find or difficult to understand, people will default to asking AI. Making that content more accessible and easier to navigate is just as important as introducing new tools.”

Hadeel Abu Baker, Senior Healthcare IT Consultant, ScienceSoft

References

- Ambience Healthcare Announces Houston Methodist Enterprise Rollout of AI Platform to Enhance Patient Care and Clinical Workflows (CHIME, February 19, 2026).

- New Epic Artificial Intelligence Tool Transforms the Health Care Experience (PR Newswire, February 2, 2026).

- Nextech Pioneers New Era in Clinical Documentation with Launch of AI-Powered Cora Scribe (Nextech, January 27, 2026).

- Artificial Intelligence-Enabled Medical Devices (FDA, March 4, 2026).

- Median Technologies receives FDA 510(k) clearance for eyonis® LCS, the first AI tech-based detection and diagnosis device for lung cancer screening (Median Technologies, February 9, 2026).

- Request for Information: Accelerating the Adoption and Use of Artificial Intelligence as Part of Clinical Care (HHS, December 23, 2025).

- Request for Information: Diagnostic Imaging Interoperability Standards and Certification (ASTP/ONC, January 29, 2026).

- Tech Trends: Healthcare IT Leaders Get Real on the State of AI in 2026 (HealthTech Magazine, January 28, 2026).

- Adoption of artificial intelligence in healthcare: survey of health system priorities, successes, and challenges (JAMIA, May 5, 2025).

- The Forrester Wave™: Customer Experience Platforms For Healthcare, Q1 2026: The AI Race Is On, But Pace Is Uneven (Forrester, March 5, 2026).

- State of AI in Healthcare and Life Sciences: 2026 Trends (NVIDIA, February 2026).

- Greenway Health Launches Agentic AI Factory to Redefine the Future of Healthcare Technology (Greenway Health, January 20, 2026).

- Patterns of AI Use in Clinical Work by Hospitalists: Survey Study (JMIR Publications, October 15, 2025).

- AI as a Healthcare Ally: How Americans are navigating the system with ChatGPT (OpenAI, January 2026).