Data Warehouse Design

A Full Guide

Since 2005, ScienceSoft’s data warehouse consultants have been helping companies design scalable and high-performing data warehouses to consolidate disparate data sources for analytical querying and reporting.

Data Warehouse Design: the Essence

Data warehouse design is creating the DWH architecture that would enable the integration, structuring, and storing of business data for analytical querying and reporting. This process also implies ensuring data quality through defining reliable data transformation and cleansing procedures.

ScienceSoft considers data warehouse design a crucial step in implementing a data warehouse solution, as at this stage we lay the foundation of the software-to-be.

Key 7 steps to data warehouse design

- Engineer requirements.

- Discover data needs.

- Conceptualize data warehouse.

- Plan the project.

- Select data warehouse technologies.

- Analyze the system and design data governance.

- Model data and design ETL processes.

- Project time: From 2 months.

- Cost: Starts from $40,000. Use our online calculator to get a tailored estimate.

- Team: A project manager, a business analyst, a data warehouse system analyst, a solution architect, a data engineer.

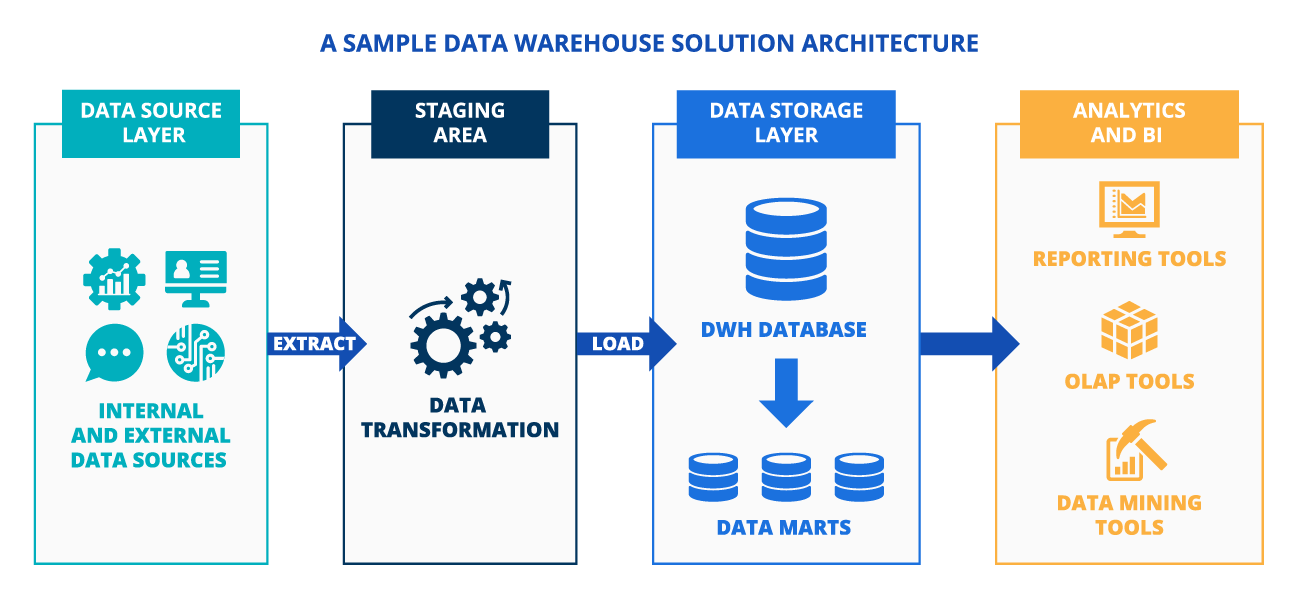

Data Warehouse Solution Architecture

A typical data warehouse architecture includes:

Data source layer

– internal and external data sources (ERP, CRM, sensor devices, social media, public databases, etc.) providing data fed into the data warehouse.

Staging area

– a temporary repository where records from data source systems undergo consolidation and processing before loading into the storage area. The staging area may be absent when data transformation goes in the target database (data warehouse/data marts).

Data storage layer

– hosting a data warehouse database – permanent data storage that keeps slightly and highly structured data, and data marts – data warehouse subsets providing information for reporting and analysis for a company’s specific business line, department, or team.

Analytics and BI

– the data in the data warehouse database and data marts can be queried via OLAP tools, data mining tools, reporting and visualization tools.

Data Warehouse Design Steps

A data warehouse design process and its duration depend on:

- Source system complexity and quality.

- Data analytics complexity.

- Data security complexity, etc.

Based on ScienceSoft's ample experience in designing and implementing data warehousing solutions, we list core steps needed to design a data warehouse solution.

|

|

Note: The timeframes below are highly approximate, as, for example, the architecture design project for an enterprise-level data warehouse may last up to 3-6 months and even more because of the project scale and specificity. |

1.

Data warehouse requirements engineering

- Determining current and future business needs to meet with a data warehouse project.

- Identifying data warehouse users’ goals and expectations.

- Determining security and compliance needs, etc.

2.

Discovery

- Preliminary data source analysis (number of data source systems to be integrated, source data volume and complexity, etc.)

- Identifying a number of potential users and their location.

- High-level review of necessary security and compliance requirements, etc.

During the discovery step, our consultants analyze relevant documentation, interview and hold brainstorming sessions with all stakeholders to collect their needs, goals, and vision of the successful data warehousing project implementation. It helps understand their priorities, plan the development process accordingly and as a result - provide a satisfactory end product.

3.

Data warehouse conceptualization

- Describing the main components of the data warehouse solution.

- Choosing between on-premises and in-cloud data warehouse deployment and outlining cloud deployment options (public, private, hybrid cloud, multi-cloud).

- Choosing an optimal option for the data warehouse solution architecture (Inmon vs. Kimball approach).

- Defining the potential of the selected architecture in solving business problems.

In our projects, we set up close cooperation of business users with a BA and a solution architect while defining the core and advanced functionality of the future solution to avoid overcomplicating the data warehouse architecture.

4.

Data warehouse design project planning

- Defining data warehouse design project scope, deliverables and timeline.

- Data warehouse design project resource and budget planning.

- Data warehouse design project risk management and risk mitigation strategies development.

Our practice has shown that effective data warehouse design project planning can help reduce project time and budget by up to 30%. To achieve that, we carefully elaborate on the findings of the preceding stages.

5.

Data warehouse technologies selection

Selecting data warehouse solution technologies for each of the data warehouse solution components (data integration tools, a database, etc.), taking into account:

- Current analytics infrastructure environment (if any).

- Data source systems.

- In-house data warehouse experts’ competencies.

- Data security strategy, etc.

6.

Data warehouse system analysis and data governance design

Detailed analysis of each data source:

- Data type and structure, data volume generated daily.

- Degree of data sensitivity and applied data access approach.

- Data quality, missing/poor data, the possibility to perform data cleansing in the data source system.

- Relation to other data sources, etc.

Setting up data governance framework by creating:

- Data quality criteria and data cleansing policies.

- Data access and usage policies, data security policies (data access policies, data encryption policies, data backup strategy, etc.).

7.

Data warehouse data modeling and ETL/ELT design

Designing data models for the data warehouse and data marts:

- Identifying entities, key attributes of each entity, relationships between entities.

- Mapping attributes to entities.

- Converting the logical data model into the tables, columns, indexes, keys of the database.

- Validating data models.

The common data models to choose from are:

- Star schema – the center of the star is a fact table surrounded by a number of associated dimension tables.

- Snowflake schema – an extension of the star schema (each dimension table is surrounded by additional dimension tables).

- Galaxy schema – contains two fact tables sharing dimension tables between them.

Designing ETL/ELT processes for data integration and data flow control.

To create a blueprint for the data ecosystem fully tailored to the customer’s particular business needs, ScienceSoft engages senior-level system analysts with considerable experience in the corresponding industry.

|

|

Note: The next steps would be data warehouse development and launch, which are not addressed within the framework of this guide. In case you are interested in the end-to-end data warehouse implementation process, explore our structured overview of the data warehouse implementation process. |

Consider Professional Data Warehouse Design and Implementation Services

With 20 years in data warehousing services, ScienceSoft helps you design and implement a cost-effective data warehouse solution meeting your tactical and strategic business needs.

Data warehouse design

- DWH requirements engineering.

- DWH design project planning.

- DWH solution conceptualization and architecture design.

- DWH software selection.

- DWH system analysis and data governance design.

- Design of data models and ETL/ELT process.

Data warehouse implementation

- DWH requirements engineering.

- DWH solution conceptualization and platform selection.

- DWH architecture design.

- DWH solution development.

- DWH quality assurance and launch.

- DWH support and evolution.

What Makes ScienceSoft a Trustworthy Partner

- Data warehousing services since 2005.

- Data analytics expertise since 1989.

- Designing and implementing business intelligence solutions since 2005.

- A dedicated team of DWH solution architects, data engineers, DevOps specialists, database administrators, QA specialists.

- Expertise in delivering complex and large-scale solutions for 30+ industries, including healthcare, banking, lending, investment, insurance, retail, ecommerce, professional services, manufacturing, transportation and logistics, energy, telecommunications, and more.

- Quality-first approach based on a mature ISO 9001-certified quality management system.

- ISO 27001-certified security management based on comprehensive policies and processes, advanced security technology, and skilled professionals.

ScienceSoft as a Trusted Data Warehousing Tech Partner:

When we first contacted ScienceSoft, we needed expert advice on the creation of the centralized analytical solution to achieve company-wide transparent analytics and reporting.

The system created by ScienceSoft automates data integration from different sources, invoice generation, and provides visibility into the invoicing process. We have already engaged ScienceSoft in supporting the solution and would definitely consider ScienceSoft as an IT vendor in the future.

Heather Owen Nigl, Chief Financial Officer, Alta Resources

Typical Roles in ScienceSoft's Data Warehouse Design Projects

Project manager

End-to-end data warehouse design project management:

- Defines data warehouse design project scope, goals and deliverables.

- Develops the data warehouse design project plan and communication approach.

- Communicates data warehouse design project purpose and expectations to stakeholders.

- Estimates and coordinates the efforts of data warehouse design project team members.

- Ensures timelines and quality of the data warehouse design project deliverables within the set budget frames.

Business analyst

- Analyzes the needs of key stakeholders and end users and translates the needs into the data warehouse requirements affecting design (e.g., the data warehouse solution should support operational analytics).

- Describes the scope of the data warehouse system, its modules, and integrations with other software.

Solution architect

- Designs a data warehouse architecture based on business and technology requirements.

- Ensures the architectural requirements (availability, scalability, performance, reliability, etc.) are implemented in the data warehouse design.

- Suggests a technology stack.

Data warehouse system analyst

- Examines data sources and data analytics software (if any) to be integrated into the data warehouse solution.

- Draws up a system requirements specification for creating data models, designing ETL/ELT processes, etc.

- Defines data integrity and data cleansing rules, etc.

Data engineer

- Designs a data model and its structures and draws up the data flows.

- Designs ETL/ELT processes.

Sourcing Models

All in-house

Pros: The company has full control over the data warehouse design project.

Caution: Risk of project delays/failure due to the shortage of resources.

Outsourcing of technical resources

The company owns the data warehouse design project management while relying on outsourced resources to perform data warehouse platform selection, data warehouse solution architecture design and data modeling, etc.

Pros: No risk of the technical resources overprovisioning after the project completion.

Caution: The model requires constant cooperation of all team members. High requirements for in-house PM and BA competencies.

Complete outsourcing (in-house project sponsor, everything else is outsourced)

The company communicates its data warehouse-related needs to a vendor, who takes on detailed data warehouse requirements engineering, business planning, systems analysis, data warehouse design, etc.

Pros: No data warehouse project delays or failures due to resource unavailability.

Caution: Increased vendor dependency.

Benefits of Data Warehouse Design with ScienceSoft

ScienceSoft's #1 priority is to reach project goals regardless of time and budget constraints. Explore our project management practices to see how we can drive your initiative to success.

Data warehouse services from A to Z

With our 20 years of experience in business intelligence, we are eager to deliver any DWH-related service to you, be it design, implementation, support, data management, security, etc.

Traditional BI + big data

Our team is equally competent to design and implement data warehouses for both traditional and big data analytics solutions.

Multi-industry experience

Having expertise in 30+ industries, we know how to design our data warehouse solutions to meet our clients' individual needs.

Get all the information you need to choose an optimal data warehouse technology for your project in our free guide.

Data Warehouse Design Cost

The cost of data warehouse design may start from $40,000. The major cost drivers include:

- The number of data sources (ERP, CRM, SCM, etc.), data disparity across different sources (e.g., the difference in the data structure, format), data source complexity.

- Data volume to be processed and stored.

- Source data quality (low-quality data requires sophisticated data cleansing procedures).

- Required data security level.

- Data warehouse velocity, scalability, and fault tolerance requirements.

The cost provided above is a starting quote for designing a 10GB data warehouse solution, which involves data transformation and data cleansing processes.

Want to get a tailored quote for your case? Use our online calculator.

About ScienceSoft

ScienceSoft is a global IT consulting and software development company headquartered in McKinney, TX, US. Since 2005, we’ve been providing data warehousing services, including data warehouse consulting, to help our clients build robust analytics with scalable and effective data warehouse solutions designed in accordance with their particular business needs. With robust security management supported by ISO 27001, we guarantee cooperation with us does not pose any risks to our clients' data security.